1. Introduction

In recent years, the use of remote sensing satellite data for urban planning, military applications, weather forecasting, robotics, automated navigation systems, and remote surveillance has increased manyfold, in addition to conventional applications such as natural resources management. These applications involve the acquisition, communication, storage, and processing of a very large number of images of the earth's surface. The situation is aggravated by increased pixel resolution, gray-level resolution, and band resolution, and by the reduced repetition cycle of satellites. All of these developments demand more bandwidth on satellite downlinks as well as more disk space for storage.

In communications, data compression techniques, under the name of image coding, are widely used to reduce bandwidth bottlenecks during data transmission. For instance, the JPEG standard is used for still image compression [1], and MPEG is used for video compression [2]. Compression methods are also used when transmitting data from satellites to ground stations [3].

A typical image processing system commonly employed for remote sensing applications is shown in Figure 1. Most application scientists use the original image data for their processing. In the majority of remote sensing applications, the classification results are of ultimate interest [4].

In this study, we examine how classification results vary when compressed image data are used instead of the original image data. Applications such as land use classification usually assume that samples of the same group have small random variations in their pixel values, while samples of different groups have markedly different pixel values. Because of the increased pixel and gray-level resolutions, samples of a group may have similar pixel values. Moreover, they have a high level of spatial autocorrelation. The majority of compression methods exploit this autocorrelation to achieve high compression ratios with acceptable PSNR (Peak Signal-to-Noise Ratio) values [5].

Our proposed algorithm is based on the concept of filtering [6]. Instead of sending the original image, we send the filtered image. In general, the number of useful DCT coefficients is larger if an 8x8 image block contains a lot of variation in its values; otherwise only a few DCT coefficients are meaningful. Filtering smooths the image, that is, it reduces the variation of the pixel values within a block. This is attractive from the point of view of compression, because the number of meaningful DCT coefficients goes down. This reduction is the compression benefit. We have compared the compression performance of our algorithm with the conventional JPEG algorithm on a variety of multiband images.
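The effect of smoothing on the number of meaningful DCT coefficients can be illustrated with a small sketch. It is not the authors' C implementation: the test block, the level shift of 128 (which assumes 8-bit pixels), and the uniform quantization step of 16 (used in place of a JPEG quantization table) are all assumptions chosen for illustration.

```python
import math

N = 8  # JPEG block size

def alpha(k):
    """Normalization factor of the DCT."""
    return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

def dct2(block):
    """2-D forward DCT of an N x N block given as a list of lists."""
    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            s = 0.0
            for x in range(N):
                for y in range(N):
                    s += (block[x][y]
                          * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                          * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = alpha(u) * alpha(v) * s
    return out

def mean_filter(block):
    """3x3 mean filter; border pixels average only the neighbours inside the block."""
    out = [[0.0] * N for _ in range(N)]
    for x in range(N):
        for y in range(N):
            vals = [block[i][j]
                    for i in range(max(0, x - 1), min(N, x + 2))
                    for j in range(max(0, y - 1), min(N, y + 2))]
            out[x][y] = sum(vals) / len(vals)
    return out

def count_nonzero(block, step=16):
    """Count DCT coefficients that remain nonzero after uniform quantization.

    The step size is an assumption; JPEG uses a per-coefficient table.
    """
    shifted = [[p - 128 for p in row] for row in block]  # level shift, assumes 8-bit data
    coeffs = dct2(shifted)
    return sum(1 for row in coeffs for c in row if round(c / step) != 0)

# A block with strong pixel-to-pixel variation (hypothetical test data).
busy = [[200 if (x + y) % 2 == 0 else 50 for y in range(N)] for x in range(N)]
smooth = mean_filter(busy)

n_busy = count_nonzero(busy)
n_smooth = count_nonzero(smooth)
print("nonzero coefficients before filtering:", n_busy)
print("nonzero coefficients after filtering: ", n_smooth)
```

For this block the filtered version leaves fewer nonzero quantized coefficients than the unfiltered one, which is the behaviour the paragraph above relies on.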

In this study, we also evaluate the classification performance of popular classification algorithms, namely Maximum Likelihood, Mahalanobis, and Euclidean distance, using original image data, conventional JPEG-compressed image data, and image data compressed with the proposed algorithm.

The paper is organized as follows. Section II introduces the standard JPEG algorithm. Section III explains the proposed compression algorithm. Section IV describes the selected classification algorithms. Section V gives details of our experiments and results. Section VI contains the conclusions of our research work.

2. Brief Overview of JPEG Encoding/Decoding System

JPEG is a well-known standardized image compression technique. JPEG is lossy, so the decompressed picture is not the same as the original one. The main reason for using JPEG is to reduce the size of image files, which is important for transmitting files across networks and for archiving image libraries. JPEG discards the less important data before coding; hence it can compress images substantially, which makes a large difference in transmission time and disk space. Figure 2 shows the basic architecture of the JPEG compression system. A brief overview of the system follows [5].

The image is first subdivided into pixel blocks of size 8x8, which are processed left to right, top to bottom. As each 8x8 block (sub-image) is encountered, its 64 pixels are level shifted by subtracting the quantity L/2, where L is the gray-level resolution of the image. The 2-D Forward Discrete Cosine Transform (FDCT) of the block (Eq. 1) [5] is then computed:

$$C(u,v) = \alpha(u)\,\alpha(v) \sum_{x=0}^{N-1} \sum_{y=0}^{N-1} f(x,y)\, \cos\left[\frac{(2x+1)u\pi}{2N}\right] \cos\left[\frac{(2y+1)v\pi}{2N}\right] \qquad (1)$$

for u, v = 0, 1, 2, ..., N-1, where

$$\alpha(u) = \begin{cases} \sqrt{1/N} & \text{for } u = 0 \\ \sqrt{2/N} & \text{for } u > 0 \end{cases} \qquad (2)$$

$$\alpha(v) = \begin{cases} \sqrt{1/N} & \text{for } v = 0 \\ \sqrt{2/N} & \text{for } v > 0 \end{cases} \qquad (3)$$

The coefficients are quantized using the 64 corresponding step-size values from the quantization table in Figure 3 [7]. After quantization, the DCT coefficients are rearranged in the zigzag order shown in Figure 4 [7].

Since the one-dimensional reordered array generated under the zigzag pattern of Figure 4 is qualitatively arranged according to increasing spatial frequency, the JPEG coding procedure is designed to take advantage of the long runs of zeros that normally result from the reordering. In particular, the nonzero AC coefficients (the term AC denotes all transform coefficients except the zeroth, or DC, coefficient) are coded using a variable-length code that defines the coefficient's value and the number of preceding zeros. The DC coefficient is difference coded relative to the DC coefficient of the previous sub-image.

The decompression process performs the inverse procedure. It first decodes the Huffman codes and then inverts the quantization step. In this stage, the decoder scales the small numbers back up by multiplying them by the corresponding quantization step sizes. The results are not exact, but they are close to the original DCT coefficients. An Inverse Discrete Cosine Transform (IDCT) (Eq. 4) [7] is then performed on the data received from the previous step:

$$\hat{f}(x,y) = \sum_{u=0}^{N-1} \sum_{v=0}^{N-1} \alpha(u)\,\alpha(v)\, C(u,v)\, \cos\left[\frac{(2x+1)u\pi}{2N}\right] \cos\left[\frac{(2y+1)v\pi}{2N}\right] \qquad (4)$$

Finally, L/2 is added to each sub-image, and the sub-images are placed in their correct positions.

The error between the original image and the reconstructed image is calculated in terms of the peak signal-to-noise ratio (PSNR):

$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{L^2}{\mathrm{MSE}}\right) \qquad (5)$$

where MSE is the mean squared error between the original image $f$ and the reconstructed image $\hat{f}$, taken over an image of $W \times H$ pixels:

$$\mathrm{MSE} = \frac{1}{WH} \sum_{x=0}^{W-1} \sum_{y=0}^{H-1} \left[ f(x,y) - \hat{f}(x,y) \right]^2 \qquad (6)$$

Mean filtering [8] is a simple, intuitive, and easy-to-implement method of image smoothing, i.e. of reducing the amount of variation between one pixel and the next or surrounding pixels. It is often used to reduce noise in an image. The idea of mean filtering is to replace each pixel in an image with the mean value of its neighbors, including itself. This has the effect of eliminating pixel values that are unrepresentative of their surroundings. Usually, a 3x3 neighborhood of pixels is considered when calculating the mean-filtered value of a pixel.

The median filter [9] is normally used to reduce noise in an image, like the mean filter. However, it often does a better job than the mean filter of preserving useful detail in the image. Like the mean filter, the median filter considers each pixel in the image in turn and looks at its neighbors to decide whether or not it is representative of its surroundings. Instead of replacing the pixel value with the mean of the neighboring pixel values, it replaces it with the median of those values.
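The difference between the mean and median filters described above can be seen on a tiny example. The following is a minimal sketch, not the implementation used in the experiments; the 5x5 test image and the choice to leave border pixels unchanged are assumptions.

```python
from statistics import mean, median

def filter3x3(image, reducer):
    """Replace each interior pixel by reducer(3x3 neighbourhood including itself).

    Border pixels are copied unchanged; this border convention is an assumption.
    """
    rows, cols = len(image), len(image[0])
    out = [row[:] for row in image]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            window = [image[i][j]
                      for i in range(r - 1, r + 2)
                      for j in range(c - 1, c + 2)]
            out[r][c] = reducer(window)
    return out

# Flat background with a single noisy pixel in the centre (hypothetical data).
image = [
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 100, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
]

mean_img = filter3x3(image, mean)
median_img = filter3x3(image, median)

print("mean-filtered centre:  ", mean_img[2][2])    # (8 * 10 + 100) / 9 = 20
print("median-filtered centre:", median_img[2][2])  # median of eight 10s and one 100 = 10
```

The mean filter spreads the spike over its neighbours, while the median filter removes it and leaves the background untouched.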

An outlier [10] is an observation that is numerically distant from the rest of the data. In an image, a pixel whose value is very different from those of its surrounding pixels can be called an outlier.

From basic statistics, we know that, at a given confidence level, the sample values of a population lie within mean ± C*P, where C is a weighting factor (critical value) and P is the standard deviation of the population. Table 1 shows the commonly used confidence levels and the corresponding critical values [11]. In our outlier-based algorithms, we use these simple confidence limits of the normal distribution to decide whether a pixel is an outlier. If the pixel is found to be an outlier at the given confidence level, we retain its value; otherwise we replace it with its mean-filtered or median-filtered value.

The OutlierMeanDCT algorithm proceeds as follows:

- Apply mean filtering with a small variation to the given original image using a 3x3 window. For each pixel, calculate the average and standard deviation of its 3x3 neighborhood. If the pixel's value is found to be an outlier (not in the range Mean ± C*P), its value is kept as it is; otherwise the mean is taken as its filtered value.
- Apply the DCT to the outlier-mean-filtered image.

3. d) OutlierMedianDCT Algorithm

- Apply median filtering with a small variation to the given original image using a 3x3 window. For each pixel, calculate the median and standard deviation of its 3x3 neighborhood. If the pixel's value is found to be an outlier (not in the range Median ± C*P), its value is kept as it is; otherwise the median is taken as its filtered value.
- Apply the DCT to the outlier-median-filtered image.
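The filtering step shared by the two outlier-based algorithms can be sketched as follows. This is an illustration under stated assumptions rather than the authors' code: the window is taken to include the centre pixel, the population standard deviation (`pstdev`) is used, border pixels are left unchanged, and the function name `outlier_filter` is hypothetical.

```python
from statistics import mean, median, pstdev

def outlier_filter(image, c=1.96, use_median=False):
    """Outlier-aware 3x3 filter.

    A pixel outside centre ± c * std of its 3x3 window is treated as an
    outlier and kept; any other pixel is replaced by the window mean
    (or by the window median when use_median is True).
    """
    rows, cols = len(image), len(image[0])
    out = [row[:] for row in image]
    for r in range(1, rows - 1):          # borders left unchanged (assumption)
        for col in range(1, cols - 1):
            window = [image[i][j]
                      for i in range(r - 1, r + 2)
                      for j in range(col - 1, col + 2)]
            centre = median(window) if use_median else mean(window)
            spread = pstdev(window)       # population std (assumption)
            if abs(image[r][col] - centre) <= c * spread:
                out[r][col] = centre      # not an outlier: take filtered value
            # otherwise the pixel is an outlier and keeps its own value
    return out

# Flat background with one bright pixel (hypothetical data).
image = [
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 100, 10, 10],
    [10, 10, 10, 10, 10],
    [10, 10, 10, 10, 10],
]

tight = outlier_filter(image, c=1.96)                    # spike kept as an outlier
loose = outlier_filter(image, c=3.08)                    # spike replaced by the mean
med = outlier_filter(image, c=1.96, use_median=True)     # median variant

print(tight[2][2], loose[2][2], med[2][2])
```

Under these assumptions, a pixel in a 9-pixel window can differ from the window mean by at most sqrt(8), about 2.83, standard deviations. For larger values of C the mean variant therefore behaves like a plain mean filter in this sketch.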

4. IV.

5. Popular Classification Algorithms a) Maximum Likelihood Classifier

Let $w_1, w_2, \ldots, w_m$ denote m distinct populations (classes) with known d-dimensional probability density functions $p_1(X), p_2(X), \ldots, p_m(X)$, respectively. The a priori probabilities that an observation is selected from populations $w_1, w_2, \ldots, w_m$ are denoted by $q_1, q_2, \ldots, q_m$, respectively [12]. According to the Bayesian ML classification rule, assuming equal costs for misclassifications, a random d-dimensional pixel vector X is classified as class $w_k$ if

$$q_k\, p_k(X) = \max_i \{ q_i\, p_i(X) \}, \quad i = 1, 2, \ldots, m \qquad (7)$$

Assuming equal a priori probabilities for all the classes, decision rule (7) becomes:

$$p_k(X) = \max_i \{ p_i(X) \}, \quad i = 1, 2, \ldots, m \qquad (8)$$

In Equations (7) and (8), the probability density $p_k(X)$ is given as:

$$p_k(X) = \frac{1}{(2\pi)^{d/2}\, |\Sigma_k|^{1/2}} \exp\left[ -\frac{1}{2} (X - M_k)^T\, \Sigma_k^{-1}\, (X - M_k) \right] \qquad (9)$$

where $M_k$ and $\Sigma_k$ are the mean vector and covariance matrix of class $w_k$.

b) Mahalanobis Classifier

According to this classifier, a d-dimensional random pixel vector X is assigned to the group to which it is nearest [13]. Each group is characterized by its mean vector, which is calculated from training data. Nearness is determined by the Mahalanobis distance between the group mean and X. In mathematical terms, the classification rule can be represented as:

$$X \in w_i \quad \text{if } d_i(X) < d_j(X) \text{ for all } j \neq i \qquad (10)$$

where i = 1, 2, ..., C indexes the groups and

$$d_i(X) = (X - M_i)^T\, \Sigma^{-1}\, (X - M_i) \qquad (11)$$

Here $M_i$ is the mean vector of the i-th group, T indicates that the vector should be transposed, and $\Sigma^{-1}$ is the inverse of the pooled covariance matrix.
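The two decision rules can be sketched for the two-dimensional case, where the matrix inverse can be written out by hand. This is a minimal illustration, not the authors' implementation: the training samples are hypothetical, equal priors are assumed as in rule (8), and the pooled covariance is formed by weighting each class covariance by its degrees of freedom, which is one common convention and an assumption here.

```python
import math

def mean_vector(samples):
    n = len(samples)
    return [sum(s[k] for s in samples) / n for k in range(2)]

def covariance(samples, m):
    """Sample covariance matrix (2x2) of 2-D samples around mean m."""
    n = len(samples)
    cov = [[0.0, 0.0], [0.0, 0.0]]
    for s in samples:
        d = [s[0] - m[0], s[1] - m[1]]
        for a in range(2):
            for b in range(2):
                cov[a][b] += d[a] * d[b] / (n - 1)
    return cov

def inverse_2x2(a):
    """Return (inverse, determinant) of a 2x2 matrix."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    inv = [[a[1][1] / det, -a[0][1] / det],
           [-a[1][0] / det, a[0][0] / det]]
    return inv, det

def quad_form(x, m, inv):
    """(x - m)^T inv (x - m)."""
    d = [x[0] - m[0], x[1] - m[1]]
    return sum(d[a] * inv[a][b] * d[b] for a in range(2) for b in range(2))

def ml_classify(x, means, covs):
    """Pick the class with the largest Gaussian log-density (equal priors, d = 2)."""
    scores = []
    for m, cov in zip(means, covs):
        inv, det = inverse_2x2(cov)
        scores.append(-math.log(2 * math.pi) - 0.5 * math.log(det)
                      - 0.5 * quad_form(x, m, inv))
    return scores.index(max(scores))

def pooled_covariance(covs, sizes):
    """Average of class covariances weighted by (n_k - 1); an assumed convention."""
    total = sum(n - 1 for n in sizes)
    return [[sum(c[a][b] * (n - 1) for c, n in zip(covs, sizes)) / total
             for b in range(2)] for a in range(2)]

def mahalanobis_classify(x, means, pooled):
    """Assign x to the class whose mean has the smallest Mahalanobis distance."""
    inv, _ = inverse_2x2(pooled)
    dists = [quad_form(x, m, inv) for m in means]
    return dists.index(min(dists))

# Hypothetical two-band training samples for two classes.
train = [
    [(1.0, 2.0), (2.0, 1.0), (2.0, 3.0), (3.0, 2.0)],
    [(7.0, 8.0), (8.0, 7.0), (8.0, 9.0), (9.0, 8.0)],
]
means = [mean_vector(s) for s in train]
covs = [covariance(s, m) for s, m in zip(train, means)]
pooled = pooled_covariance(covs, [len(s) for s in train])

print(ml_classify((3.0, 3.0), means, covs))             # class index 0
print(mahalanobis_classify((7.0, 7.0), means, pooled))  # class index 1
```

Class indices are zero-based in this sketch. For real multiband pixels, the same rules apply with d equal to the number of bands and a general matrix inverse in place of `inverse_2x2`.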

6. c) Euclidean Distance Classifier

According to this classifier, a random d-dimensional pixel vector X is assigned to the group to which it is nearest [14]. Each group is characterized by its mean vector, which is calculated from training data. Nearness is determined by the Euclidean distance between the group mean and X. In mathematical terms, the classification rule can be represented as:

$$X \in w_i \quad \text{if } d_i(X) < d_j(X) \text{ for all } j \neq i \qquad (12)$$

where i = 1, 2, ..., C indexes the groups and

$$d_i(X) = (X - M_i)^T (X - M_i) \qquad (13)$$

Here $M_i$ is the mean vector of the i-th group and T indicates that the vector should be transposed.
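The minimum-distance rule is short enough to write out directly. The following is a small sketch; the three-band group means and the test pixel are hypothetical values chosen only to show the rule at work.

```python
def squared_euclidean(x, m):
    """Squared Euclidean distance (x - m)^T (x - m)."""
    return sum((xi - mi) ** 2 for xi, mi in zip(x, m))

def euclidean_classify(x, means):
    """Assign x to the group whose mean vector is nearest (zero-based index)."""
    dists = [squared_euclidean(x, m) for m in means]
    return dists.index(min(dists))

# Hypothetical mean vectors of three groups in a three-band image.
group_means = [
    [30.0, 40.0, 50.0],
    [80.0, 90.0, 100.0],
    [120.0, 60.0, 20.0],
]

pixel = [78.0, 88.0, 105.0]
label = euclidean_classify(pixel, group_means)
print(label)  # nearest to the second group, index 1
```

The rule is the Mahalanobis rule with the covariance matrix replaced by the identity, so it ignores differences in variance and correlation between bands.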

7. Experimentations and Results

For the experimental work, Landsat TM data from the USGS database (www.usgs.gov) are used.

A confusion matrix [15] is used to assess the accuracy of an image classification. The strength of a confusion matrix is that it identifies the nature of the classification errors as well as their quantities. In a confusion matrix, rows correspond to classes in the test set and columns correspond to classes in the classification result. The diagonal elements of the matrix represent the number of correctly classified pixels of each class. The off-diagonal elements represent misclassified pixels. The overall accuracy is calculated as the total number of correctly classified pixels divided by the total number of test pixels.

Another measure that can be extracted from a confusion matrix is the kappa coefficient [16], a popular measure of agreement in categorical data. The motivation of this measure is to remove from the percentage of correctly classified pixels the percentage expected by chance. The coefficient is calculated as in Eq. (14), where $cm_1, cm_2, \ldots, cm_n$ are the column marginals, $rm_1, rm_2, \ldots, rm_n$ are the row marginals, and n is the total number of test pixels.

The higher the value of kappa, the better the classification performance. If all information classes are correctly identified, kappa takes the value 1. As the off-diagonal entries increase, the value of kappa decreases.

For classification, two data sets were used. The first was a 1048 x 920 Landsat TM image (Owensvalley) with all 7 bands. The second contained 500 samples each of four ground types (dense scrub, rock, forest, and open scrub) from the same scene, and was used to measure classification accuracy. All 2000 test patterns were classified with the Maximum Likelihood, Mahalanobis, and minimum distance classifiers. The classification performance of all the classifiers is given in Tables 4, 5, and 6. Figure 6 shows the overall accuracy of all the classifiers. The classification performance on images compressed with the proposed method is observed to be almost the same as on JPEG-compressed images and original images.
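The overall accuracy and kappa coefficient can be recomputed from a confusion matrix with a few lines of code. The sketch below uses the usual form of Cohen's kappa, with the chance agreement taken from the products of row and column marginals. The matrix is the first confusion matrix reported for the original image; the function names are illustrative.

```python
def overall_accuracy(cm):
    """Correctly classified pixels (diagonal) divided by all test pixels."""
    total = sum(sum(row) for row in cm)
    correct = sum(cm[i][i] for i in range(len(cm)))
    return correct / total

def kappa(cm):
    """Cohen's kappa: (P0 - Pe) / (1 - Pe)."""
    n = sum(sum(row) for row in cm)
    row_marginals = [sum(row) for row in cm]
    col_marginals = [sum(row[j] for row in cm) for j in range(len(cm))]
    p0 = overall_accuracy(cm)
    pe = sum(r * c for r, c in zip(row_marginals, col_marginals)) / (n * n)
    return (p0 - pe) / (1 - pe)

# Rows: true class; columns: assigned class
# (dense scrub, rock, forest, open scrub).
cm_original = [
    [500, 0, 0, 0],
    [20, 477, 3, 0],
    [0, 0, 500, 0],
    [0, 0, 0, 500],
]

acc = overall_accuracy(cm_original)
k = kappa(cm_original)
print(f"overall accuracy = {100 * acc:.2f} %")  # 98.85 %
print(f"kappa = {k:.4f}")                       # about 0.9847; the table reports 0.9846
```

The recomputed values agree with the reported overall accuracy of 98.85 % and, up to rounding, with the reported kappa coefficient.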

![Figure 3 : Quantization Matrix [7]](https://engineeringresearch.org/index.php/GJRE/article/download/926/version/100386/6-A-Critical-Performance_html/8255/image-3.png)

Experiments were carried out under MS Windows XP version 2002, SP3 edition. The experimental system is equipped with an Intel Core 2 Duo 2.60 GHz processor with 1 GB RAM. Training sites were labeled using ERDAS Imagine 8.6 (copyright 1991-2002, Leica Geosystems). Programs were written in C under Microsoft Visual Studio 2005 version 8.0.

We have carried out extensive simulations with the selected images and proposed algorithms. Table 2 shows the compression benefit and PSNR values of the MeanDCT algorithm versus the OutlierMeanDCT algorithm. With all the images, we found that the MeanDCT and OutlierMeanDCT algorithms have better compression ratios than conventional JPEG coding. The PSNR loss in the MeanDCT and OutlierMeanDCT algorithms is negligible compared to conventional JPEG coding. Comparing MeanDCT with the corresponding OutlierMeanDCT, the compression benefit of MeanDCT is greater than that of OutlierMeanDCT for C = 1.28 to 2.58. As the value of C increases in the outlier variant, the compression benefit increases. For C = 3.08 to 3.27, the compression benefit of MeanDCT and OutlierMeanDCT is almost the same. The PSNR of MeanDCT is lower than that of OutlierMeanDCT for C = 1.28 to 2.58. As the value of C decreases in the outlier variant, PSNR increases. For C = 3.08 to 3.27, the PSNR of MeanDCT and OutlierMeanDCT is almost the same. Figure 5 shows the sample (Owensvalley) original image, the JPEG-compressed image, and the image compressed with the proposed method (mean-filtered approach).

| A Critical Performance Evaluation of Classification Methods with Modified JPEG Decompressed | ||||||

| Multiband Images | ||||||

| No. of Bits 559107 357508 357508 No. of Bits 249582 232905 232911 No. of Bits 991131 583964 583964 | 357512 372414 386369 412008 441576 482908 232913 232925 233052 234078 234701 235876 583964 590799 606683 650116 708184 801412 | |||||

| Image Bolivia7 Monolake6 % of Saving % of Saving PSNR % of Loss PSNR 46.608 37.557 37.557 Conventional Mean DCT Outlier Mean DCT (C=3.27) -36.057 36.057 31.4 29.516 29.516 -6 6 -6.681 6.679 % of Loss -19.419 19.419 % of Saving -41.081 41.081 PSNR 28.087 24.942 24.942 Owensvalley5 % of Loss -11.197 11.197 | Outlier Mean 36.056 DCT (C=3.08) 29.516 6 6.678 37.557 19.419 41.081 24.942 11.197 | Outlier Mean 6.673 DCT (C=2.58) 33.391 30.895 26.309 21.021 13.628 Outlier Mean DCT (C=2.33) Outlier Mean DCT (C=1.96) Outlier Mean DCT (C=1.645) Outlier Mean DCT (C=1.28) 29.535 29.556 29.613 29.727 29.998 5.939 5.872 5.691 5.328 4.464 6.623 6.211 5.962 5.491 37.557 37.557 37.558 37.559 37.56 19.419 19.419 19.417 19.415 19.412 40.391 38.788 34.406 28.547 19.141 24.949 24.969 25.034 25.178 25.613 11.172 11.101 10.869 10.357 8.808 | ||||

| No. of Bits No. of Bits 3083120 2077249 2077242 2077250 2124088 2181853 2306572 2464900 2682210 215085 172156 172144 172141 175585 178003 183439 19046 198835 No. of Bits 439079 352864 352873 352899 353207 354337 358195 365769 381841 No. of Bits 478658 346282 346277 346290 346905 348258 354345 370218 403209 | ||||||

| 2 Year | Bolivia1 Total Monolake7 Owensvalley6 | % of Saving PSNR % of Loss % of Saving PSNR % of Loss % of Saving PSNR 39.828 38.134 38.134 -19.959 19.964 19.966 18.364 17.240 14.713 39.785 38.073 38.073 38.074 38.074 38.083 38.101 -4.303 4.303 4.300 4.300 4.277 4.232 -32.625 32.625 32.625 31.105 29.232 25.187 20.051 13.003 11.447 7.555 38.164 38.215 4.074 3.946 34.547 32.625 32.625 32.625 32.633 32.646 32.680 32.755 32.932 -5.563 5.563 5.563 5.540 5.502 5.404 5.187 4.674 -19.635 19.633 19.622 19.557 19.299 18.421 16.696 13.035 38.134 38.134 38.139 38.162 38.235 38.418 % of Loss -4.253 4.253 4.253 4.253 4.240 4.182 3.999 3.540 % of Saving -27.655 27.656 27.653 27.525 27.242 25.971 22.655 15.762 PSNR 35.711 29.586 29.586 29.586 29.593 29.595 29.620 29.825 30.554 % of Loss -17.151 17.151 17.151 17.131 17.126 17.056 16.482 14.440 | 41 Year 013 2 | |||

| XIII Issue XVI Version I ( ) | Monolake1 Monolake2 Total Owensvalley7 Total Owensvalley1 | Bolivia2 Bolivia3 % of Saving No. of Bits 275655 208384 208383 208394 212633 216319 225195 % of Saving -24.404 24.404 24.400 22.862 21.525 18.305 PSNR 37.939 36.872 36.872 36.872 36.88 36.89 36.914 % of Loss -2.812 2.812 2.812 2.791 2.764 2.701 No. of Bits 394036 274432 274432 274430 283304 291242 307235 % of Saving -30.353 30.353 30.354 28.102 26.087 22.028 PSNR 34.539 33.098 33.098 33.098 33.113 33.128 33.185 % of Loss -4.172 4.172 4.172 4.128 4.085 3.920 No. of Bits 410486 329732 329729 329722 330006 331107 336165 344531 361041 236059 250482 14.364 9.132 36.971 37.064 2.551 2.306 325783 351372 17.321 10.827 33.272 33.455 3.668 3.138 % of Saving -19.672 19.673 19.675 19.606 19.337 18.105 16.067 12.045 PSNR 39.871 37.503 37.503 37.503 37.503 37.509 37.537 37.594 37.737 % of Loss -5.939 5.939 5.939 5.939 5.924 5.853 5.710 5.352 No. of Bits 306006 257407 257409 257441 257713 258682 261126 266277 276333 % of Saving -15.881 15.881 15.870 15.781 15.465 14.666 12.983 9.696 PSNR 42.237 40.988 40.988 40.988 40.988 40.994 41.014 41.064 41.178 % of Loss -2.957 2.957 2.957 2.957 2.942 2.895 2.777 2.507 No. of Bits 2749816 2248277 2248254 2248355 2250035 2255578 2279061 2324609 2421179 No. of Bits 708596 449957 449957 449957 458283 498865 536339 595087 -18.239 18.239 18.236 18.175 17.973 17.119 15.463 11.951 PSNR 41.114 38.186 38.185 38.185 38.185 38.190 38.211 38.266 38.408 % of Loss -7.121 7.124 7.124 7.124 7.111 7.060 6.927 6.581 No. of Bits 537518 360478 360483 360486 367990 378051 399052 423410 460890 % of Saving -36.500 36.500 36.500 35.325 33.640 29.598 24.309 16.018 PSNR 30.718 28.584 28.584 28.584 28.62 28.655 28.703 28.801 29.095 % of Loss -6.947 6.947 6.947 6.829 6.715 6.559 6.240 5.283 No. 
of Bits 4249620 2777127 2775122 2775119 2815187 2881656 3040273 3252354 3584143 % of Saving -32.936 32.935 32.935 31.539 29.667 25.760 21.228 14.255 PSNR 32.756 30.116 30.116 30.116 30.127 30.161 30.224 30.299 30.575 % of Saving -34.649 34.649 34.697 33.754 32.190 28.457 23.467 15.659 PSNR 32.530 29.740 29.740 29.740 29.755 29.778 29.826 29.934 29.974 % of Loss -8.059 8.059 8.059 8.026 7.922 7.729 7.500 6.698 % of Loss -8.576 8.576 8.576 8.530 8.459 8.312 7.980 7.857 | I XIII Issue XVI Version ( ) F Volume | |||

| Bolivia4 Bolivia5 Monolake3 Monolake4 Owensvalley2 % of Saving No. of Bits 623322 393045 393045 393045 398738 411789 % of Saving -36.943 36.943 36.943 36.030 33.936 PSNR 30.959 29.219 29.219 29.219 29.225 29.247 % of Loss -5.620 5.620 5.620 5.600 5.529 No. of Bits 694304 429601 429601 429601 437597 451664 % of Saving -38.124 38.124 38.124 36.973 34.947 PSNR 29.986 28.004 28.004 28.004 28.009 28.023 % of Loss -6.609 6.609 6.609 6.593 6.546 No. of Bits 399635 324167 324170 324158 324460 325220 328846 335993 350594 442420 483673 526620 29.022 22.403 15.513 29.283 29.373 29.652 5.413 5.122 4.221 483706 525250 584315 30.332 24.348 15.841 28.066 28.17 28.489 6.402 6.056 4.992 % of Saving -18.884 18.883 18.886 18.810 18.620 17.713 15.925 12.271 PSNR 40.435 38.850 38.85 38.849 38.849 38.859 38.883 38.952 39.113 % of Loss -3.919 3.919 3.922 3.922 3.897 3.838 3.667 3.269 No. of Bits 366063 300168 300130 300212 300264 300614 303531 309183 322696 % of Saving -18.000 18.011 17.988 17.974 17.879 17.082 15.538 11.846 PSNR 41.097 39.268 39.268 39.268 39.268 39.274 39.295 39.349 39.496 % of Loss -4.450 4.450 4.450 4.450 4.435 4.384 4.253 3.895 No. of Bits 409426 288904 288897 288887 293676 299851 313895 331761 358355 -29.436 29.438 29.440 28.271 26.763 23.332 18.969 12.473 PSNR 35.207 33.626 33.626 33.626 33.638 33.649 33.685 33.749 33.939 % of Loss -4.490 4.490 4.490 4.456 4.425 4.323 4.141 3.601 No. of Bits 558702 371381 371378 371376 377700 388065 410282 438638 479219 % of Saving -33.527 33.528 33.528 32.396 30.541 26.565 21.489 14.226 PSNR 32.590 30.696 30.696 30.696 30.713 30.737 30.785 30.871 31.12 Owensvalley3 % of Loss -5.811 5.811 5.811 5.759 5.685 5.538 5.274 4.510 | obal Journal of Researches in Engineering Gl | |||||

| Owensvalley4 | Bolivia6 Monolake5 % of Saving No. of Bits 321611 242123 242129 242127 243817 246467 No. of Bits 578965 451039 451032 451010 451400 452566 457120 468155 492798 No. of Bits 565589 374161 374168 374159 379834 390527 413718 443804 485971 252569 262095 277678 % of Saving -24.715 24.713 24.714 24.188 23.364 21.467 18.505 13.660 PSNR 37.221 33.598 33.598 33.598 33.598 33.597 33.600 33.612 33.654 % of Loss -9.773 9.773 9.773 9.773 9.736 9.728 9.696 9.583 % of Saving -22.095 22.096 22.100 22.033 21.831 21.045 19.139 14.882 PSNR 37.724 34.999 34.999 34.999 34.999 35.003 35.030 35.115 35.36 % of Loss -7.223 7.223 7.223 7.223 7.212 7.141 6.916 6.266 -33.845 33.844 33.846 32.842 30.952 26.851 21.532 14.077 PSNR 32.645 30.63 30.63 30.63 30.65 30.682 30.729 30.812 31.035 % of Loss -6.172 6.172 6.172 6.111 6.013 5.869 5.614 4.931 | |||||

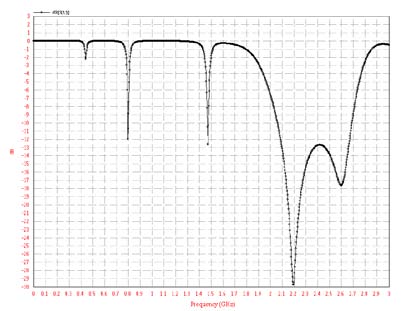

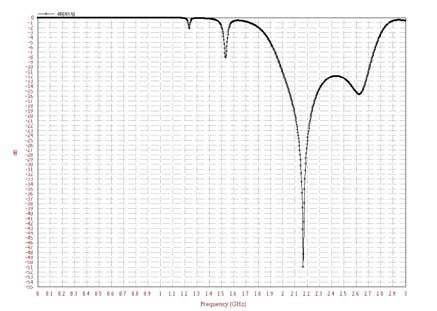

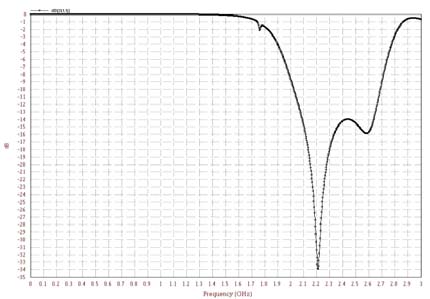

Table 3 shows the compression benefit and PSNR values of the MedianDCT algorithm versus the OutlierMedianDCT algorithm. With all the images, we found that the MedianDCT and OutlierMedianDCT algorithms have better compression ratios than conventional JPEG coding. The PSNR loss in the MedianDCT and OutlierMedianDCT algorithms is very small compared to conventional JPEG coding. Comparing MedianDCT with the corresponding OutlierMedianDCT, the compression benefit of MedianDCT is greater than that of OutlierMedianDCT for C = 1.28 to 2.58. As the value of C increases in the outlier variant, the compression benefit increases. For C = 3.08 to 3.27, the compression benefit of MedianDCT and OutlierMedianDCT is the same. The PSNR of MedianDCT is lower than that of OutlierMedianDCT for C = 1.28 to 2.58.

| Image | Measure | JPEG | MedianDCT | OutlierMedianDCT (C=3.27) | OutlierMedianDCT (C=3.08) | OutlierMedianDCT (C=2.58) | OutlierMedianDCT (C=2.33) | OutlierMedianDCT (C=1.96) | OutlierMedianDCT (C=1.645) | OutlierMedianDCT (C=1.28) |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Bolivia1 | No. of Bits | 215085 | 178234 | 178240 | 178242 | 181353 | 183473 | 188038 | 194726 | 204035 |
| Bolivia1 | % of Saving | - | 17.133 | 17.130 | 17.129 | 15.683 | 14.697 | 12.575 | 9.465 | 5.137 |
| Bolivia1 | PSNR | 39.785 | 38.432 | 38.432 | 38.432 | 38.488 | 38.53 | 38.624 | 38.886 | 39.219 |
| Bolivia1 | % of Loss | - | 3.400 | 3.400 | 3.400 | 3.260 | 3.154 | 2.918 | 2.259 | 1.422 |
| Owensvalley7 | No. of Bits | 708596 | 492900 | 492901 | 492901 | 501063 | 512030 | 537843 | 571450 | 626345 |
| Owensvalley7 | % of Saving | - | 30.439 | 30.439 | 30.439 | 29.287 | 27.740 | 24.097 | 19.354 | 11.607 |
| Owensvalley7 | PSNR | 30.718 | 28.639 | 28.639 | 28.639 | 28.723 | 28.790 | 28.917 | 29.132 | 29.595 |
| Owensvalley7 | % of Loss | - | 6.768 | 6.768 | 6.768 | 6.494 | 6.276 | 5.863 | 5.163 | 3.655 |
| Total | No. of Bits | 4249620 | 3032712 | 3032716 | 3032711 | 3069953 | 3130758 | 3276024 | 3475985 | 3793053 |
| Total | % of Saving | - | 28.635 | 28.635 | 28.635 | 27.759 | 26.328 | 22.910 | 18.204 | 10.743 |
| Total | PSNR | 32.530 | 29.877 | 29.877 | 29.877 | 29.922 | 29.978 | 30.094 | 30.334 | 31.004 |
| Total | % of Loss | - | 8.155 | 8.155 | 8.155 | 8.017 | 7.845 | 7.488 | 6.750 | 4.691 |
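The percentage figures in the compression tables appear consistent with simple relative differences against conventional JPEG. The sketch below states those formulas explicitly; they are inferred from the tabulated numbers rather than given in the text, so they should be read as an assumption. The inputs are the Bolivia1 values for JPEG and MedianDCT.

```python
def percent_saving(bits_jpeg, bits_proposed):
    """Relative reduction in coded size with respect to conventional JPEG."""
    return 100.0 * (bits_jpeg - bits_proposed) / bits_jpeg

def percent_psnr_loss(psnr_jpeg, psnr_proposed):
    """Relative drop in PSNR with respect to conventional JPEG."""
    return 100.0 * (psnr_jpeg - psnr_proposed) / psnr_jpeg

# Bolivia1: JPEG versus MedianDCT.
saving = percent_saving(215085, 178234)
loss = percent_psnr_loss(39.785, 38.432)

print(f"% of Saving = {saving:.3f}")  # table reports 17.133
print(f"% of Loss   = {loss:.3f}")    # table reports 3.400
```

Both results match the tabulated values to within rounding.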

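$$\kappa = \frac{P_0 - P_e}{1 - P_e} \qquad (14)$$

where $P_0$ is the observed proportion of correctly classified test pixels and $P_e$ is the proportion of agreement expected by chance.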

Original Image

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 100 | 500 | 500 | 0 | 0 | 0 |
| 2. Rock | 95.4 | 500 | 20 | 477 | 3 | 0 |
| 3. Forest | 100 | 500 | 0 | 0 | 500 | 0 |
| 4. Open scrub | 100 | 500 | 0 | 0 | 0 | 500 |

Misclassification = 1.15 %; Overall accuracy = 98.85 %; Kappa coefficient = 0.9846

Conventional JPEG compression image

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 98.2 | 500 | 491 | 6 | 3 | 0 |
| 2. Rock | 93 | 500 | 23 | 465 | 12 | 0 |
| 3. Forest | 96.8 | 500 | 8 | 8 | 484 | 0 |
| 4. Open scrub | 99.8 | 500 | 1 | 0 | 0 | 499 |

Misclassification = 3.05 %; Overall accuracy = 96.95 %; Kappa coefficient = 0.9593

Proposed compression image (mean-filtered approach)

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 98.6 | 500 | 493 | 4 | 1 | 2 |
| 2. Rock | 78.4 | 500 | 81 | 392 | 27 | 0 |
| 3. Forest | 96.2 | 500 | 18 | 1 | 481 | 0 |
| 4. Open scrub | 100 | 500 | 0 | 0 | 0 | 500 |

Misclassification = 6.7 %; Overall accuracy = 93.3 %; Kappa coefficient = 0.9106

Original Image

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 97.2 | 500 | 486 | 1 | 0 | 13 |
| 2. Rock | 71.8 | 500 | 118 | 359 | 5 | 18 |
| 3. Forest | 100 | 500 | 0 | 0 | 500 | 0 |
| 4. Open scrub | 100 | 500 | 0 | 0 | 0 | 500 |

Misclassification = 7.75 %; Overall accuracy = 92.25 %; Kappa coefficient = 0.8966

Conventional JPEG compression image

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 93.6 | 500 | 468 | 4 | 5 | 23 |
| 2. Rock | 51.2 | 500 | 101 | 256 | 69 | 74 |
| 3. Forest | 98.6 | 500 | 7 | 0 | 493 | 0 |
| 4. Open scrub | 100 | 500 | 0 | 0 | 0 | 500 |

Misclassification = 14.15 %; Overall accuracy = 85.85 %; Kappa coefficient = 0.811

Proposed compression image (mean-filtered approach)

| Spectral Class | Correct Classification (%) | Number of Samples Used | Classified as Group 1 | Classified as Group 2 | Classified as Group 3 | Classified as Group 4 |
| --- | --- | --- | --- | --- | --- | --- |
| 1. Dense scrub | 91 | 500 | 455 | 5 | 6 | 34 |
| 2. Rock | 48.8 | 500 | 79 | 244 | 90 | 87 |
| 3. Forest | 96 | 500 | 18 | 2 | 480 | 0 |
| 4. Open scrub | 99.6 | 500 | 2 | 0 | 0 | 498 |

Misclassification = 16.15 %; Overall accuracy = 83.85 %; Kappa coefficient = 0.784