1. Introduction

The process of checking fabricated ICs for incorrect behavior caused by defects such as logical errors, delay errors, and stuck-at faults [1] is called VLSI testing [1][2][3]. Testing is performed by generating test vectors, which are sets of binary values, and applying them to the inputs of the circuit. A fault is detected by comparing the circuit's response to each test vector with the stored expected response. In the early days of circuit design and testing, achieving high fault coverage was the main concern. With newer technologies, the focus has shifted toward additional targets such as reduced test data volume, shorter test time, and low-power testing. Every possible fault in the circuit has to be tested, and covering all faults in a complete circuit may require a large number of test vectors. A single test vector can detect more than one fault, and more than one test vector can be generated for a single fault. The goal is therefore to build a set of test vectors that covers as many faults as possible with as few vectors as possible. The power dissipated during testing of a circuit is much higher than the power dissipated during normal operation. The major cause is the higher toggle count of memory elements such as flip-flops. The main aim in testing sequential circuits is to find a sequence of test vectors that achieves high fault coverage with a minimized toggle count. Reordering the test vectors in the test sequence is the simplest way to reduce test power: because the test vectors themselves are not modified, the fault coverage is preserved.

In this paper we review state-of-the-art models for reducing power consumption during digital VLSI circuit testing. The main reason for considering power consumption during test application is that the power and energy of a digital system are much higher in test mode than in system mode [4, 5, 6]. The reason is that a power-saving system mode activates only a few modules at a time, whereas test patterns cause as many nodes as possible to switch simultaneously. Another reason is that successive functional input vectors applied to a given circuit are significantly correlated, while the correlation between successive test patterns can be very low [7].

2. II.

3. Taxonomy of Low Power Testing

a) Problems of low-power VLSI circuit testing

Recent advances in complex, high-performance, low-power devices implemented in deep submicron technologies have created a new class of sophisticated electronic products, such as laptop computers, cellular telephones, audio- and video-based multimedia products, and energy-efficient desktop computers. For this new class of systems, power management is a critical factor that cannot be ignored during test development. Three key parameters characterize the power properties of a system under test [2]:

• The consumed energy corresponds directly to the switching activity generated in the circuit during test application, and it affects battery lifetime during remote testing.
• The average power consumption is the ratio between the energy and the test time. This parameter is more important than the energy because high average power causes hot spots and reliability problems.
• The peak power consumption corresponds to the highest switching activity generated in the circuit under test during one clock cycle. Peak power determines the thermal and electrical limits of the components and the system packaging requirements [3].

Academic research has been carried out on low-power design and, separately, on test. For external testing or BIST, however, industrial practice has relied on ad hoc solutions for power consumption [8]. These solutions include:

• Oversizing the power supply, package, and cooling to withstand the higher current during testing.
• Running tests at a reduced operating frequency.
• Partitioning the system and planning the tests appropriately.

When working with high-density systems such as modern ASICs and MCMs, all power constraints must be satisfied in the design phase so that a non-destructive test can be performed. Besides protecting the circuit under test (CUT) from destruction, other factors also motivate reducing power consumption during test application, including cost, reliability, performance verification, autonomy, and technology-related issues. These issues are discussed below.

Consumer electronic products that require plastic packages impose a strict limit on energy dissipation. Because heavy switching activity during test leads to increased current flow (higher peak power), the CUT may require a more expensive package. Furthermore, electromigration has been found to cause erosion of conductors and subsequent circuit failure. The electromigration rate is determined mainly by temperature and current density, so the elevated temperature and current density caused by excessive switching during test application drastically reduce the reliability of the circuits under test. This phenomenon is especially severe in circuits equipped with BIST because these circuits are tested regularly, for example in an online BIST approach. High test activity affects not only reliability but also the autonomy of remote and portable battery-powered systems. Remote systems often spend most of their time in a standby state with almost no power consumption, interrupted by periodic self-tests. Saving power during test mode therefore extends battery lifetime.

Two further points underline the importance of the power minimization problem. First, the current trend toward circuit miniaturization (for portability, for example) rules out the use of special cooling equipment to remove excess heat produced during test application. Second, the growing use of at-speed testing to find slow chips no longer allows the increase in test power dissipation to be offset by a reduced test frequency. In the past, tests were typically applied at rates much lower than a circuit's normal clock rate because only stuck-at fault coverage was considered important. Today, aggressive timing makes it necessary to identify slow chips through delay testing. This is especially evident in the strong demand for performance-certified dies manufactured for use in MCMs.

Another factor shows how critical this problem is: a circuit's ability to dissipate power is not always the same during test and during normal operation. When a die is functionally tested immediately after wafer fabrication, the bare die is not yet packaged and has very limited means of dissipating power or heat. In normal use, the die is packaged with the amount of power to be dissipated in mind and sometimes has extra provisions such as heat-dissipating fins. During bare-die testing, the absence of packaging therefore rules out conventional heat removal techniques. This can be a problem for applications based on MCM technology, where the potential advantages in circuit density and performance cannot be realized without access to fully tested bare dies. If proper care is not taken to limit power dissipation during bare-die testing, the die under test may be damaged, which lowers overall yield and raises production cost. Two other factors also motivate power reduction during test. The first is related to problems encountered when testing memories with wafer probes [12]. High switching activity during testing causes increased power and ground noise, which is particularly serious during wafer probing because the power supply connections are poor. The excessive noise can erroneously change the logic state of circuit lines, so that some good dies fail the test, causing a loss of yield.

The second reason concerns problems that arise during BIST. As external testing becomes increasingly difficult with modern technologies and modern designs, BIST has emerged as a promising solution to VLSI testing problems. BIST is a design-for-test methodology that aims to detect faulty components in a system by incorporating test logic on the chip. The advantages of BIST include improved testability, at-speed testing of modules, reduced need for automatic test equipment, and support during system maintenance [12] [13]. Furthermore, with the arrival of core-based "system-on-a-chip" designs, BIST has become one of the most favorable testing methods because it helps protect the intellectual property of the design. In BIST, test patterns are usually generated by a Linear Feedback Shift Register (LFSR), which has the advantages of compact size and the ability to serve as a signature analyzer as well. Unfortunately, the excitation from an LFSR requires longer test lengths to reach acceptable coverage levels, which increases total energy consumption. Higher energy consumption reduces the autonomy of portable equipment, especially in applications where periodic tests are applied. In addition, when the test vectors are applied at the nominal operating frequency, the average power dissipation is higher than in normal mode. This is because consecutive functional input vectors applied to a circuit in its normal mode of operation are significantly correlated, whereas consecutive vectors of a sequence generated by an LFSR have been shown to have low correlation [15]. This can force the use of special packages and cooling systems to keep thermal conditions within specification, which increases the final product cost. It is therefore very important to reduce power and energy dissipation during testing in order to satisfy the cost, performance verification, autonomy, and reliability constraints.
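As a rough illustration of LFSR-based pattern generation, the following minimal sketch steps a small Fibonacci LFSR and measures the average Hamming distance between consecutive states, used here as a simple proxy for input toggling. The 4-bit width, the tap positions (chosen to correspond to the polynomial x^4 + x^3 + 1), the seed, and the function names are assumptions made for this example and are not taken from the cited works.

```python
def lfsr_states(seed, taps, width):
    """Yield successive states of a Fibonacci LFSR until the seed recurs.

    `taps` are 0-based bit positions XORed together to form the feedback bit.
    """
    mask = (1 << width) - 1
    state = seed
    while True:
        yield state
        feedback = 0
        for t in taps:
            feedback ^= (state >> t) & 1
        # Shift left by one and insert the feedback bit at the LSB.
        state = ((state << 1) | feedback) & mask
        if state == seed:
            return


def average_hamming(states):
    """Average number of bit flips between consecutive states."""
    flips = [bin(a ^ b).count("1") for a, b in zip(states, states[1:])]
    return sum(flips) / len(flips)


# Hypothetical 4-bit LFSR: taps at bits 3 and 2, seed 0b0001.
states = list(lfsr_states(seed=0b0001, taps=(3, 2), width=4))
print(len(states), average_hamming(states))
```

With these assumed taps the register cycles through every non-zero 4-bit state before repeating, which is the maximal-length behavior normally wanted from a BIST pattern generator.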

Another point relates to scan-based BIST. Scan-based self-test architectures are very popular because of their low impact on performance and area [14]. From a low-power perspective, however, scan-based architectures are costly, because every test pattern is accompanied by a shift operation with high power consumption to load the test pattern and read out the test response. Scan-based architectures are widely used in industrial environments. Energy dissipation during scan shifting therefore has to be limited to meet the power constraints specified for test mode and to avoid destroying the system.

4. b) Low Power Testing Strategies

Power dissipation in a CMOS circuit arises mainly from the charging and discharging of capacitances during switching. To explain this power dissipation during test, consider a circuit composed of N nodes and a test sequence of length L applied to the circuit inputs. The average energy consumed at node i per switching is $\frac{1}{2} C_i V_{dd}^2$, where $C_i$ is the equivalent output capacitance at node i and $V_{dd}$ is the power supply voltage. A good approximation of the energy consumed at node i during a time interval t is $\frac{1}{2} S_i C_i V_{dd}^2$, where $S_i$ is the average number of transitions during this interval (also called the switching activity factor at node i). Moreover, nodes connected to more than one logic gate in the circuit are nodes with a higher output capacitance. Based on this fact, and as a first approximation, the output capacitance $C_i$ can be taken as proportional to the fan-out at node i, denoted $F_i$ [Wang 1995]. An estimate of the energy $E_i$ consumed at node i during the time interval t is therefore given by:

$$E_i = \frac{1}{2} S_i F_i C_0 V_{dd}^2$$

where $C_0$ is the minimum output capacitance of the circuit. According to this expression, energy consumption at the logic level is a function of the fan-out $F_i$ and the switching activity factor $S_i$. The fan-out is determined by the circuit topology, and the activity factor $S_i$ can be estimated with a logic simulator. The product $S_i F_i$ is called the weighted switching activity (WSA) at node i and represents the only variable part of the energy consumed at node i during test application.

Based on the above formulation, the energy consumed in the circuit after application of a pair of successive input vectors $(V_{k-1}, V_k)$ can be expressed as:

$$E_{V_k} = \frac{1}{2} C_0 V_{dd}^2 \sum_i s(i,k) F_i$$

where i ranges over all the nodes of the circuit and $s(i,k)$ is the number of transitions caused by $V_k$ at node i. Now consider the complete test sequence of length L required to reach the target fault coverage. The total energy consumed in the circuit after application of the complete test sequence is given below, where k ranges over all the vectors of the test sequence:

$$E_{total} = \frac{1}{2} C_0 V_{dd}^2 \sum_k \sum_i s(i,k) F_i$$

By definition, power is the ratio between energy and time. The instantaneous power is generally computed as the amount of energy required during a small instant of time $t_{small}$, such as the portion of a clock cycle immediately following the rising or falling edge of the system clock. The instantaneous power dissipated in the circuit after application of a test vector $V_k$ is therefore:

$$P_{inst}(V_k) = \frac{E_{V_k}}{t_{small}}$$

The peak power corresponds to the highest value of instantaneous power measured during test. It can therefore be expressed in terms of the highest energy consumed during a small instant of time in the test session:

$$P_{peak} = \max_k P_{inst}(V_k) = \max_k \left( \frac{E_{V_k}}{t_{small}} \right)$$

Finally, the average power consumed during the test session can be computed from the total energy and the test time. Given that the test time is the product $L \cdot T$, where T is the nominal clock period of the circuit, the average power can be expressed as:

$$P_{average} = \frac{E_{total}}{L \cdot T}$$
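The energy and power expressions above can be evaluated directly once per-node transition counts are available, for instance from a logic simulator. The following is a minimal sketch under that assumption; the function name, argument names, and the toy numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def power_metrics(transitions, fanout, c0, vdd, t_small, clock_period):
    """Evaluate the simplified weighted-switching-activity power model.

    transitions[k][i] is the number of transitions caused at node i by
    test vector k; fanout[i] is the fan-out F_i of node i.
    """
    half_c0_vdd2 = 0.5 * c0 * vdd ** 2
    # Energy per vector: 1/2 * C0 * Vdd^2 * sum_i s(i,k) * F_i
    energy_per_vector = [
        half_c0_vdd2 * sum(s * f for s, f in zip(row, fanout))
        for row in transitions
    ]
    total_energy = sum(energy_per_vector)
    # Instantaneous power per vector and its maximum (peak power).
    inst_power = [e / t_small for e in energy_per_vector]
    peak_power = max(inst_power)
    # Average power over a test of L vectors at clock period T.
    average_power = total_energy / (len(transitions) * clock_period)
    return energy_per_vector, total_energy, peak_power, average_power


# Toy example: 2 vectors, 3 nodes, with C0 = 2 and Vdd = 1 so that
# the constant 1/2 * C0 * Vdd^2 equals 1.
per_vec, e_total, p_peak, p_avg = power_metrics(
    transitions=[[1, 0, 1], [0, 1, 1]],
    fanout=[1, 2, 3],
    c0=2.0, vdd=1.0, t_small=0.5, clock_period=1.0,
)
print(per_vec, e_total, p_peak, p_avg)
```

Because technology and supply voltage only enter through a constant factor, comparing two test sequences with this model comes down to comparing their weighted switching activity.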

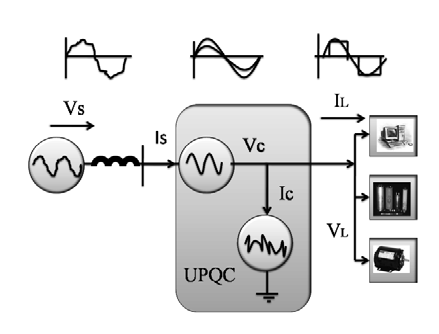

Although the expressions for power and energy given above are based on a simplified model, they are accurate enough for the intended purpose of power analysis during test. From these expressions, and for a given CMOS technology and supply voltage, the switching activity factor is the only parameter that affects the energy, peak power, and average power. This explains why most of the methods proposed so far for reducing power and energy during test are based on reducing the switching activity factor.

c) Low power external testing using Automatic Test Equipment

Figure 1 : Basic principle of external testing using ATE

Because of the design complexity of state-of-the-art VLSI circuits, automation is essential in the test development process. The basic principle of external testing using automatic test equipment is shown in Figure 1, with its three basic components: the circuit under test (CUT), which is the part of the integrated circuit tested for manufacturing defects; the automatic test equipment (ATE), which includes a control processor, timing module, power module, and format module; and the ATE memory, which supplies test patterns and checks test responses. These components are summarized below [16].

Tests are applied to the circuit under test (CUT), which is a piece of a silicon wafer or a packaged device, to detect manufacturing defects. Because testing connects and disconnects millions of parts to the ATE in order to test each part individually, the connections between the CUT pins or bond pads and the ATE must be robust and easy to change.

The ATE contains a control processor, a timing module, a power module, and a format module. The control processor is a host computer that controls the flow of the test process and communicates to the other ATE modules whether the CUT is faulty or fault-free. The timing module defines the clock edges required for each pin of the CUT. The format module extends the test pattern information with timing and format information that specifies when the signal on a pin goes high or low. The power module supplies power to the CUT and is responsible for accurately measuring power and voltages.

The ATE memory holds the test patterns applied to the CUT and the expected fault-free responses, which are compared with the actual responses during testing. State-of-the-art ATE measures voltage responses with millivolt accuracy at a timing accuracy of hundreds of picoseconds [16]. The test patterns, or test vectors, stored in the ATE memory are obtained using automatic test pattern generation (ATPG) algorithms [17]. From here on, the terms test pattern and test vector are used interchangeably. ATPG algorithms are classified as random or deterministic. Random ATPG algorithms generate random vectors, and the test efficiency is determined by fault simulation [24]. Deterministic ATPG algorithms generate tests by processing a structural netlist at the logic level of abstraction using a specified fault list from a fault universe. Compared to random ATPG algorithms, deterministic ATPG algorithms produce shorter and higher-quality tests in terms of test efficiency, at the cost of longer computation time. The high computation time associated with deterministic ATPG algorithms is caused by the low controllability and observability of the internal nodes of the circuit. This problem is more severe for sequential circuits where, despite recent advances in ATPG [18], computation time is large and test efficiency is not satisfactory. In addition, achieving high test efficiency is made harder and more time-consuming by the growing gap between the number of transistors on a chip and the limited number of input/output pins.

Design for testability (DFT), shown in Figure 1, is a technique that improves testability, in terms of controllability and observability, by adding test hardware and introducing specific test-oriented decisions in the VLSI design flow. This often results in shorter test application time, higher fault coverage and hence test efficiency, and easier ATPG. Scan-based DFT is the most common DFT methodology: sequential elements are modified into scan cells and connected into a serial shift register. This is done by providing a scan mode for every scan cell, in which data is not loaded in parallel from the combinational part of the circuit but is shifted serially from the previous scan cell in the shift register. Scan-based DFT is further divided into full scan and partial scan. The main advantage of full scan is that, by modifying all the sequential elements into scan cells, it reduces the ATPG problem for sequential circuits to the more computationally tractable ATPG for combinational circuits. In contrast, partial scan modifies only a subset of the sequential elements, leading to lower test area overhead at the expense of more complex ATPG. The introduction of scan-based DFT changes the test application strategy, which describes how test patterns are applied to the CUT. For combinational circuits or non-scan sequential circuits, a test pattern is applied every clock cycle; when scan-based DFT is used, every test pattern is applied in a scan cycle.

7. d) Low-Power Scan Testing

The problem of excessive power during test is much more severe in scan testing than in functional mode. This is mainly because applying each test pattern in a scan design requires a number of shift clock cycles that contribute to an unnecessary increase in switching activity [19] [20]. A study reported in [21] indicates that while 10% to 20% of the memory elements (D flip-flops and D latches) in a digital circuit change state during one clock cycle in functional mode, 35% to 40% of these memory elements, when reconfigured as scan cells, can switch state during scan testing. In the worst case, all scan cells can change state. Another report [22] further indicates that the power consumed during scan testing can be 3 times higher than during normal functional operation, and that peak power can be 30 times higher.

Scan design requires reconfiguring the memory elements (usually D flip-flops) into scan cells and then connecting them together to form scan chains [19] [23] [20]. During slow-speed scan testing, every scan test pattern must first be shifted into the scan chains. This requires setting the scan cells to shift mode and applying a number of load/unload (shift) clock cycles. Scan shifting is usually performed at low speed to avoid high power dissipation. A capture clock cycle is then used to capture the test response of the design into the scan cells, which requires setting the scan cells to normal/capture mode. A scan enable signal (SE) is generally used for this switching. The scan design is in shift mode when SE is set to 1, and the circuit is switched to normal/capture mode when SE is set to 0.

Since the early 1990s, this slow-speed scan test technique has been widely used in industry to test for stuck-at faults and bridging faults. Figure 7.2 shows the shift and capture operations for a typical scan design, together with the associated current waveform for every clock cycle. The current waveform varies from cycle to cycle because the current is proportional to the number of 0-to-1 and 1-to-0 transitions on the scan cells, which in turn cause switching in the circuit under test (CUT).
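To make the link between shift cycles and scan-cell transitions concrete, here is a minimal sketch that shifts a pattern into a single scan chain one bit per clock and counts how many cells change value in each shift cycle. The list-based chain model, the bit ordering (scan-in feeds cell 0), and the function name are assumptions of this example; the combinational logic driven by the scan cells is not modeled.

```python
def shift_in_toggles(chain, pattern):
    """Shift `pattern` into `chain` and count cell toggles per shift cycle.

    chain   : current scan-cell values, index 0 is nearest to scan-in
    pattern : bits presented at scan-in, in the order they are shifted
    Returns (final_chain, toggles_per_cycle).
    """
    chain = list(chain)
    toggles = []
    for bit in pattern:
        # Each cell takes the value of its predecessor; cell 0 takes scan-in.
        shifted = [bit] + chain[:-1]
        toggles.append(sum(old != new for old, new in zip(chain, shifted)))
        chain = shifted
    return chain, toggles


# Two hypothetical patterns shifted into an all-zero 4-cell chain.
_, alternating = shift_in_toggles([0, 0, 0, 0], [1, 0, 1, 0])
_, grouped = shift_in_toggles([0, 0, 0, 0], [1, 1, 0, 0])
print(sum(alternating), sum(grouped))
```

In this toy case the alternating pattern produces more scan-cell transitions during shifting than the pattern with grouped identical bits, which is the kind of cycle-to-cycle variation the current waveform reflects.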

The problem of excessive power during (slow-speed) scan testing can be divided into two subproblems: excessive power during the shift operation (known as excessive shift power) and excessive power during the capture operation (known as excessive capture power) [Girard 2002]. The latter was introduced to address the clock skew problems that arise when many flip-flops change their output values at the same time after the capture operation. Over the past decade, many methods have been proposed to reduce shift power, capture power, or both simultaneously during slow-speed scan testing.

8. e) Low power testing in BIST

Recently, new techniques have emerged to deal with the energy and power problems in BIST. Zorian [25] proposed a distributed BIST control scheme to simplify the BIST execution of complex ICs, especially during higher levels of test activity. The average power is reduced, and temperature-related problems are consequently avoided, at the cost of increased test time. On the other hand, the total energy remains the same, so the autonomy of the system is not improved.

Cheung et al. [26] proposed a technique for the low-power testing of RAMs. It reduces energy consumption while preserving the test time, so the average power is also reduced.

Wang et al. [27] proposed a BIST strategy called Dual-Speed LFSR (DS-LFSR), based on two LFSRs running at different speeds. They obtain a reduction in average power and energy consumption of between 13% and 70% with no loss of fault coverage.

More recently, Hertwig et al. [28] proposed a low-power strategy for scan-based BIST. Their new design of the scan path elements yields energy savings of 70% to 90% compared with a standard scan-based BIST architecture. Zhang et al. [29] proposed another low-power technique for scan-based BIST.

The problem of limiting power during test application for BIST circuits is also addressed in [30]. The main constraint is to reduce energy consumption without changing the stuck-at fault coverage. The authors show that energy consumption is not influenced by the choice of polynomial and that the seed of the LFSR is the most important factor. A technique based on a simulated annealing algorithm is therefore proposed to select the seed of a given LFSR that yields the lowest energy consumption. Experimental results on the ISCAS benchmarks show variations in weighted switching activity ranging from 147% to 889% depending on the selected LFSR seed.

Girard et al. [31] present a test vector inhibiting technique that filters out the non-detecting subsequences of a pseudo-random test sequence generated by an LFSR. Decoding logic is used to store the first and last vectors of the non-detecting subsequences to be filtered. To perform selective filtering, a D-type flip-flop operating in toggle mode switches the enable/disable mode of the LFSR. The reduction in energy consumption ranges from 18% to 78%, with a small cost in area overhead of the BIST structure (less than 3% of the circuit area). Manich et al. [32] proposed an enhanced technique in which the filtering action is extended to all the non-detecting subsequences. The main idea is based on merging all the decoding logic modules. For some of the benchmark circuits tested, savings in energy and average power consumption reach 90%.
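The idea of locating non-detecting subsequences can be sketched in software as follows. This is a simplified illustration, not the hardware scheme of [31] or [32]: it assumes the set of faults detected by each vector is already known from fault simulation, and it treats a vector as non-detecting when it detects no fault that an earlier vector has not already detected. The function name and data layout are hypothetical.

```python
def non_detecting_runs(detected_by_vector):
    """Find maximal runs of consecutive vectors that add no new fault.

    detected_by_vector[k] is the set of faults detected by vector k
    (assumed to come from a separate fault simulation step).
    Returns a list of (first_index, last_index) pairs, one per run,
    mirroring the first/last vectors a decoder would need to recognize.
    """
    covered = set()
    runs = []
    start = None
    for k, faults in enumerate(detected_by_vector):
        if faults - covered:
            # Vector k detects at least one new fault: keep it.
            covered |= faults
            if start is not None:
                runs.append((start, k - 1))
                start = None
        elif start is None:
            # First vector of a new non-detecting run.
            start = k
    if start is not None:
        runs.append((start, len(detected_by_vector) - 1))
    return runs


# Hypothetical fault simulation result for a 5-vector sequence.
print(non_detecting_runs([{"a"}, {"a"}, set(), {"b"}, {"a", "b"}]))
```

Inhibiting the vectors inside each returned run leaves the set of detected faults unchanged under this first-detection criterion, which is why the fault coverage can be preserved while switching activity is saved.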

In [33], a new low-power/energy BIST approach based on circuit partitioning is proposed. The goal of this approach is to reduce the average power and energy consumption during pseudo-random testing and to lower the peak power consumption without changing the fault coverage. The main idea is to partition the original circuit into two structural subcircuits so that each subcircuit can be tested successively in two different BIST sessions. Partitioning the circuit and scheduling the test sessions reduces the average switching activity per time interval (i.e., the average power) as well as the peak power consumption. Furthermore, the total energy consumption during BIST is also reduced, because the test length needed to test the two subcircuits is not far from the test length for the original circuit. With slight modifications to conventional TPG structures, the proposed approach can be applied to either test-per-scan or test-per-clock BIST schemes. Results on ISCAS circuits show that average power reductions of up to 62%, peak power reductions of up to 57%, and energy reductions of up to 82% can be achieved with very low area overhead and almost no penalty on circuit timing.

9. III.

10. Current State of the Art

The main reason for considering power consumption during test application is that the power and energy of a digital system are considerably higher in test mode than in system mode [34], [35], [36]. The reason is that test patterns cause as many nodes as possible to switch, whereas a power-saving system mode activates only a few modules at a time. Another reason is that successive functional input vectors applied to a given circuit during system mode are significantly correlated, whereas the correlation between successive test patterns can be very low [37].

Over the last decade [-94, 116], extensive research has been carried out on low-power design and testability of VLSI circuits. With the advent of deep submicron technology and tight yield and reliability constraints, performing a non-destructive test for high-performance VLSI circuits requires that power dissipation during test application does not exceed the power constraint set by the power dissipated during functional operation of the circuit [52], [60], [69], [103], [111], [125]. This is because excessive power dissipation during test application, caused by high switching activity, can cause problems [69], [118]. In addition, the electromigration rate increases with temperature and current density, which is not accounted for by state-of-the-art approaches [82], [117]:

a) Low-power combinational circuits are synthesized by algorithms [42], [48], [81], [85], [108], [113], [115], [122] that seek to optimize the signal or transition probabilities of circuit nodes using the spatial dependencies inside the circuit (spatial correlation), and assuming the transition probabilities of primary inputs to be known (temporal correlation) [99]. Exploiting spatial and temporal correlations in functional operation for low-power synthesis of combinational circuits leads to high switching activity during test application, because the correlation between consecutive test patterns generated by automatic test pattern generation (ATPG) algorithms is very low [109]. This is because a test pattern is generated for a given target fault without any consideration of the previous test pattern in the test sequence. As a result, the lower correlation between consecutive test patterns leads to higher switching activity, and hence higher power dissipation, during test application than during functional operation [126].

b) Low-power sequential circuits are synthesized by state assignment algorithms that use state transition probabilities [46], [47], [49], [55], [98], [108], [116]. The state transition probabilities are computed assuming the input probability distribution and the state transition graph that are valid during functional operation. When the scan DFT technique is used, these two assumptions do not hold in the test mode of operation. While test responses are shifted out, the scan cells are assigned uncorrelated values, which destroys the correlation between successive functional states. Moreover, for data path circuits with a large number of states that are synthesized for low power using the correlations between data transfers [53], [84], [86], [87], [88], [89], [90], the scan registers are assigned uncorrelated values in test mode that are never reached during functional operation, which can lead to higher power dissipation than in functional operation.

Design techniques at the register-transfer level of abstraction also lead to high power dissipation during test application, for the following reason. Systems that contain a large number of memory elements and multi-functional execution units employ power-conscious architectural decisions such as power management, where blocks are not activated simultaneously during functional operation [45], [90]. Inactive blocks therefore do not contribute to dissipation during functional operation. The fundamental premise of power management is that systems and their components experience non-uniform workloads during functional operation [44]. However, this assumption does not hold during test application. To reduce test application time when the system is in test mode, tests need to be executed concurrently. Executing tests concurrently makes many blocks active at the same time, which conflicts with the power management policy. This leads to higher power dissipation during test application than during functional operation.

To overcome the low correlation between consecutive test vectors during test application in combinational circuits, a new ATPG tool was proposed in [126]. Although it achieves the objectives of safe and inexpensive testing of low-power circuits, the technique in [126] increases the test application time. A different approach to reducing power dissipation during test application in combinational circuits is based on test vector ordering [61], [63], [72], [74], [75]. The basic idea behind test vector ordering is to find a new order of the test set such that the correlation between consecutive test patterns is maximized.
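A simple way to picture test vector ordering is a greedy nearest-neighbor pass that always picks, as the next vector, the remaining one with the fewest differing input bits. The sketch below is only an illustration under the assumption that the Hamming distance at the primary inputs is an acceptable proxy for switching activity in the circuit; it is not the algorithm of any cited work, and the function names are hypothetical.

```python
def hamming(a, b):
    """Number of bit positions in which two equal-length vectors differ."""
    return sum(x != y for x, y in zip(a, b))


def total_toggles(sequence):
    """Sum of input bit flips between consecutive vectors in a sequence."""
    return sum(hamming(a, b) for a, b in zip(sequence, sequence[1:]))


def greedy_order(vectors):
    """Reorder vectors greedily so each next vector is closest to the last.

    The first vector is kept in place; ties are broken by original order.
    The set of vectors is unchanged, so fault coverage is unaffected.
    """
    remaining = list(vectors)
    ordered = [remaining.pop(0)]
    while remaining:
        last = ordered[-1]
        best = min(range(len(remaining)),
                   key=lambda j: hamming(last, remaining[j]))
        ordered.append(remaining.pop(best))
    return ordered


tests = ["0000", "1111", "0001", "1110"]
reordered = greedy_order(tests)
print(total_toggles(tests), total_toggles(reordered), reordered)
```

A greedy pass like this runs in quadratic time in the number of vectors and gives no optimality guarantee, but it shows how the same test set can produce far fewer input transitions simply by changing the order of application.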

The test vector ordering problem reduces to finding a minimum-cost Hamiltonian path in a complete, undirected, weighted graph, and the computational time of the approach in [61] is therefore very high. This high computational time is overcome by the methods proposed in [63], [72], [74], where test vector ordering assumes a high correlation between switching activity in the circuit under test and the Hamming distance [63], [74] or transition density [72] at the circuit primary inputs.

For combinational circuits employing BIST, many methods for reducing power dissipation have been proposed recently [50], [57], [58], [71], [73], [95], [96], [97], [120], [123], [124]. In [120], the use of a dual-speed linear feedback shift register (DS-LFSR) lowers the transition density at the circuit inputs, leading to reduced power dissipation. The DS-LFSR operates with a slow-speed and a normal-speed LFSR in order to increase the correlation between consecutive patterns. Note that the slow LFSR takes both a slow clock and a normal clock as inputs, together with a control signal that selects the appropriate clock according to the mode of operation. It was shown in [120] that the test efficiency of the DS-LFSR is higher than that of an LFSR based on a primitive polynomial, with a reduction in power dissipation at the cost of more complex control and clocking. Optimum weight sets for the input signal distribution are determined in order to minimize average power [124], while the optimum initial conditions of the cellular automata (CA) cells used for pattern generation are found in [123] so that peak power is minimized. It was shown in [50] that all primitive-polynomial LFSRs of the same size produce the same power dissipation in the circuit under test, which motivates the mixed solution based on LFSR reseeding and test vector inhibiting proposed in [-68, 70] to filter a few non-detecting subsequences of a pseudorandom test sequence.

A subsequence is non-detecting if all the faults it detects are also detected by other detecting subsequences of the pseudorandom test sequence. An improvement of the test vector inhibiting methods was presented in [73], [95], [96], [97], where all the non-detecting subsequences are eliminated. The basic principle of filtering non-detecting subsequences is to use decoding logic to detect the first and the last vectors of each non-detecting subsequence. After the first vector of a non-detecting subsequence is detected, the inhibiting structure, which uses a network of transmission gates to enable signal propagation [104], prevents the application of test vectors to the CUT. A distinguishing feature of the proposed BIST structure is a seed memory made of two parts: the first part holds seeds for random-pattern-resistant faults and the second part holds seeds used to inhibit the non-detecting subsequences [71]. The seed memory combined with the decoding logic is better than decoding logic alone in terms of lower power dissipation and higher fault coverage, at the cost of higher BIST area overhead.
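To make the effect of the dual-speed arrangement of [120] more concrete, the sketch below models it in Python under strong simplifying assumptions: two 4-bit Fibonacci LFSRs with feedback taken from the two most significant bits (a maximal-length configuration), a slow LFSR that advances only once every `slow_ratio` cycles, and bit flips at the concatenated outputs as a stand-in for transition density. The widths, taps, seeds and clock ratio are illustrative choices, not parameters from [120].

```python
def lfsr_step(state: int, taps=(3, 2), width: int = 4) -> int:
    """Advance a Fibonacci LFSR by one clock: shift left, feed back XOR of taps."""
    fb = 0
    for t in taps:
        fb ^= (state >> t) & 1
    return ((state << 1) | fb) & ((1 << width) - 1)


def pattern_flips(cycles: int, slow_ratio: int, width: int = 4) -> int:
    """Count bit flips between consecutive patterns built from two LFSRs.

    One LFSR is clocked every cycle; the other is clocked only every
    `slow_ratio` cycles (slow_ratio=1 models two normal-speed LFSRs).
    """
    fast, slow = 0b0001, 0b1001  # arbitrary non-zero seeds (assumption)
    prev = (slow << width) | fast
    flips = 0
    for cycle in range(1, cycles + 1):
        fast = lfsr_step(fast, width=width)
        if cycle % slow_ratio == 0:
            slow = lfsr_step(slow, width=width)
        cur = (slow << width) | fast
        flips += bin(prev ^ cur).count("1")
        prev = cur
    return flips


normal_speed = pattern_flips(cycles=60, slow_ratio=1)
dual_speed = pattern_flips(cycles=60, slow_ratio=4)
print(normal_speed, dual_speed)
```

In this toy model the inputs driven by the slow LFSR stay constant for most cycles, so the dual-speed configuration produces fewer input transitions than two normal-speed LFSRs over the same number of cycles. The model says nothing about fault coverage, which [120] evaluates separately.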

A different approach to filtering non-detecting vectors, inspired by the pre-computation architecture [39], is presented in [58]. The MASK block is a circuit with a latch-based or AND-based architecture that either eliminates or keeps unchanged the vectors generated by the LFSR. The enable logic implements an incompletely specified Boolean function whose on-set [62] is the set of unchanged vectors and whose off-set is the set of eliminated (non-detecting) vectors [58]. An improvement in the area overhead associated with filtering non-detecting vectors, without penalty in fault coverage or test application time, was obtained using non-linear hybrid cellular automata [57]. The hybrid cellular automata generate test patterns for the CUT using cell configurations optimized for low power dissipation under given fault coverage and test application time constraints. The regularity of multiplier units and the linear-sized test sets needed to reach high fault coverage lead to efficient low-power BIST implementations for data paths [43], [76], [77], [78], [80].

Regardless of the implementation type of the test pattern generator, BIST architectures differ widely from one another in terms of power dissipation [106]. Three different architectures were evaluated for power dissipation, BIST area overhead, and test application time. It was found [106] that the architecture consisting of an LFSR and a shift register (SR) yields lower power dissipation, BIST area overhead, and test application time than a single LFSR or two LFSRs with reciprocal characteristic polynomials. However, this comes at the cost of lower fault coverage, and hence reduced test efficiency, because the modified sequence of patterns applied to the CUT does not detect all the random-pattern-resistant faults.

As shown in [70], power dissipation is influenced by partitioning the circuit into sub-circuits and by sub-circuit test planning. The main reason for circuit partitioning is to obtain two structurally different circuits of roughly the same size, so that each can be tested in turn in two separate sessions. To reduce the BIST area overhead of the resulting BIST scheme, the number of connections between the two sub-circuits has to be as small as possible. It was shown in [70] that by partitioning a single circuit unit into two sub-circuits and executing two successive tests, savings in power dissipation can be achieved with approximately the same test application time as in the case of a single circuit unit.

Although the methods proposed for reducing power dissipation during test application in combinational circuits at the logic level of abstraction give good results, they can be further combined with the methods proposed at the register-transfer level.

A test pattern generation method for low power dissipation was proposed in [56] to reduce power dissipation in non-scan sequential circuits during test application. The technique is built on three independent steps: redundant test pattern generation, power dissipation measurement, and optimum test sequence selection. The solution proposed in [56], which is based on genetic algorithms, achieves significant savings in power dissipation but cannot be applied to scan sequential circuits, where shifting power dissipation is the major contributor to the total power dissipation.

To reduce shifting power dissipation in scan sequential circuits, the test vector inhibiting methods proposed for combinational circuits are extended to scan sequential circuits [59]. Decoding logic determines, from the content of the LFSR, whether the test pattern to be shifted belongs to the subset of detecting sequences. If the pattern is non-detecting, its propagation through the SR and the scan chain is blocked. In [68] the test vector inhibiting method is extended: the modules and modes with the highest power dissipation are identified, and gating logic is introduced to lower power dissipation. Despite considerable savings in power dissipation, vector detection and gating logic introduce not only significant area overhead but also significant performance degradation for the modified scan cell design. In [114] a new scan BIST structure was proposed, based on the experimental observation that very high fault coverage can be obtained with a small number of clusters of test vectors. Although not targeted specifically at low power, the resulting patterns can also lead to lower power dissipation. A similar approach is used in the low-transition random test pattern generator (LT-RTPG) proposed in [121], where neighboring bits of the test vectors are assigned the same values in most test vectors. A simple and fast procedure to compact scan vectors as much as possible without exceeding power dissipation limits was proposed in [110]. All the preceding scan-based BIST techniques [59], [68], [110], [114], [121] introduce test area overhead and/or additional performance degradation when compared with scan DFT techniques.

A different method [61], based on test vector and scan cell ordering, reduces power dissipation in full-scan sequential circuits without any overhead in test area or performance degradation. The input sequence applied at the primary and pseudo inputs of the CUT while the test response is shifted out, as in standard scan design, is significantly modified when the scan cells and test vectors are reordered. The new sequence obtained after reordering leads to lower switching activity, and hence lower power dissipation, because of the higher correlation between consecutive patterns at the primary and pseudo inputs of the CUT. A further benefit of the post-ATPG method proposed in [61] is that the reduction in power dissipation during test application is obtained without any loss in fault coverage and/or increase in test application time. The method is test set dependent, which means that the power reduction depends on the size and the values of the test vectors in the test set. Because of this test set dependence, the method proposed in [61] is computationally infeasible, owing to the large computational time needed to explore the huge design space.

A different method for achieving power savings is the use of extra primary input vectors, which leads to additional test data volume [-88, 189]. The method proposed in [119] exploits the redundant information that occurs during scan shifting to reduce switching activity in the CUT. While the pseudo-output part of the test response is being shifted out over the clock cycles of the scan cycle, the value of the primary inputs is redundant. This redundancy can therefore be exploited by computing an extra primary input vector for every clock cycle of the scan cycle of each test pattern. However, despite achieving considerable power savings, the method requires a long test application time, which is linked to a long computational time, and a large volume of test data. The volume of test data is reduced in [79], where a D-algorithm-like ATPG [38] is developed to generate a single control vector that masks circuit activity while the test responses are shifted out. Unlike the method proposed in [119], which relies on a large number of extra primary input vectors, the solution presented in [79] employs a single extra primary input vector for all the clock cycles of the scan cycle of each test pattern.

The input control method proposed in [79] can further be combined with the previously proposed scan cell and test vector ordering [61]. This combination achieves, however, only modest savings in power dissipation, despite a considerable reduction in test data volume compared with [119]. Moreover, both methods based on extra primary input vectors [-88, 189] require high computational time and are therefore infeasible for large sequential circuits. Despite their effectiveness in reducing power dissipation in scan sequential circuits, the previous methods trade off one test parameter to the benefit of another test parameter of the scan-based DFT technique. New methods are therefore needed for small to medium-sized and large scan sequential circuits.

To overcome the problem of high power dissipation during test application at the register-transfer level (RTL), numerous power-constrained test scheduling algorithms have been proposed under a BIST environment [51], [54], [91], [92], [93], [100], [101], [102], [105], [107], [125]. The method in [125] schedules the tests under a power constraint by grouping and ordering based on floorplan information. A further exploration of the solution space of the scheduling problem is provided in [54], where a resource allocation graph formulation of the test scheduling problem is given and tests are scheduled concurrently without exceeding their power constraint during test application. To simplify the scheduling problem, the worst-case power dissipation (maximum instantaneous power dissipation) is used to characterize the power constraint of each test. The test compatibility graph is annotated with power and test application time information.

The power rating of each test, characterized by its maximum power dissipation, and its test application time are used for scheduling unequal-length tests under a power constraint. To avoid identifying all the cliques in a graph and solving the covering table minimization problem used in [54], both of which are well-known NP-hard problems, the solutions proposed in [100], [101], [102] use list scheduling, the left-edge algorithm, and a tree growing technique as heuristics for the block test scheduling problem. Power-constrained test scheduling is extended to systems-on-a-chip in [51], [105], [107]. Test infrastructure and power-constrained test scheduling algorithms for a scan-based architecture are presented in [91], [92], [93].
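A minimal sketch of power-constrained scheduling in the spirit of a list-scheduling heuristic is given below. It assumes that each test is characterized by a fixed worst-case power value and a test length, that tests placed in the same session run concurrently, and that there are no resource conflicts between tests. The test names, the numbers and the session-based scheme are hypothetical and do not reproduce the algorithms of [100], [101], [102].

```python
from typing import NamedTuple


class CoreTest(NamedTuple):
    name: str
    power: int   # worst-case power of the test (arbitrary units, assumption)
    length: int  # test application time in clock cycles (assumption)


def schedule(tests, power_limit):
    """Greedily pack tests into sessions whose summed power stays within the limit.

    Tests in one session run concurrently, so a session lasts as long as its
    longest test. Returns the list of sessions (each a list of CoreTest).
    """
    sessions = []
    # Longest tests first, so that shorter tests fill the remaining power budget.
    for test in sorted(tests, key=lambda t: t.length, reverse=True):
        if test.power > power_limit:
            raise ValueError(f"{test.name} alone exceeds the power limit")
        for session in sessions:
            if sum(t.power for t in session) + test.power <= power_limit:
                session.append(test)
                break
        else:
            sessions.append([test])
    return sessions


def total_time(sessions):
    """Total test application time when sessions run one after another."""
    return sum(max(t.length for t in s) for s in sessions)


tests = [
    CoreTest("t1", 4, 100),
    CoreTest("t2", 3, 80),
    CoreTest("t3", 5, 60),
    CoreTest("t4", 2, 40),
]
plan = schedule(tests, power_limit=8)
print([[t.name for t in s] for s in plan])  # [['t1', 't2'], ['t3', 't4']]
print(total_time(plan))                     # 160, versus 280 if run one by one
```

Using a single worst-case power figure per test keeps the packing step simple, which is the same simplification the scheduling formulations above rely on.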

All the earlier methods for power-constrained test scheduling assume a fixed amount of power dissipation associated with each test. This is an optimistic assumption that does not hold when BIST is employed for RTL data paths designed for low power, because of useless power dissipation.

Observation: Most previous work on low-power testing focuses on scan-based circuits, since full scan is the most widely adopted test approach in industry. In a full-scan circuit, all the internal flip-flops (FFs) are replaced by scan-FFs that work in two modes, making it possible to control and observe all the internal memory elements. In shift mode, the scan-FFs are connected as shift registers through which test stimuli are loaded and test responses are unloaded. In capture mode, the scan-FFs work as functional FFs: the test stimuli are applied to the combinational portion of the circuit and the test responses are stored in the same FFs in the next clock cycle. The test power consumption may exceed the circuit's power rating in both shift mode and capture mode. Methods based on scan chain manipulation (e.g., [127][128][134][139]) are very effective in reducing scan shift power but do not help reduce scan capture power. Other methods reduce the switching activity of the CUT by taking advantage of the 'don't-care' bits in test cubes, e.g., the low-power automatic test pattern generation (ATPG) methods in [129][137] and the test vector manipulation techniques in [130][133][135][138]. Some of these reduce scan shift power, while others help reduce scan capture power. Even after applying these methods, some patterns may still exceed the circuit's power rating if the CUT is large. One solution in this case is to rerun low-power ATPG for the faults detected by those problematic patterns [136]. However, even when such ATPG tools are available, they usually result in larger test data volume and are computationally very expensive. Another solution is to partition the original circuit into multiple sub-circuits and test them separately through clock gating [131]. This not only significantly reduces the power consumed in the logic, but also reduces the power consumed in the clock tree, which is a major contributor to test power consumption. Partitioning the circuit for test, however, often involves rerunning the lengthy ATPG process for the partitioned sub-circuits and solving the problem of how to achieve adequate fault coverage for the glue logic between sub-circuits. An interesting question is whether partitioning for test can be done without these limitations.

Average power consumption during scan testing can be controlled by reducing the scan clock frequency, a well-known solution used in industry. In contrast, peak power consumption during scan testing is independent of the clock frequency and is therefore much harder to control. Among the power-aware scan testing methods proposed, only some relate directly to peak power. As reported in recent industrial experience [140], scan patterns in some designs may consume much more peak power than the normal mode and can result in failures during manufacturing test. For example, if the instantaneous power is high, the temperature in some part of the die can exceed the limit of thermal capacity and cause immediate damage to the chip. In practice, destruction really occurs when the instantaneous power exceeds the maximum power allowance during several successive clock cycles, and not just during a single clock cycle [140]. Such temperature-related or heat dissipation problems therefore relate more to elevated average power than to peak power. The main problem with excessive peak power concerns yield reduction and is explained below.

At high speed, excessive peak power during test causes high rates of current change (di/dt) in the power and ground rails and hence leads to excessive power and ground noise (VDD or ground bounce). This may erroneously change the logic state of some circuit nodes and cause some good dies to fail the test, leading to unnecessary yield loss. Similarly, IR-drop and crosstalk effects are phenomena that may show up as an error in test mode but not in functional mode.

IR-drop refers to the amount of decrease (increase) in the power (ground) rail voltage due to the resistance of the devices between the rail and a node of interest in the CUT. Crosstalk relates to capacitive coupling between neighboring nets within an IC. With high peak power demands during test, the voltages at some gates in the circuit are reduced. This causes these gates to exhibit higher delays, possibly leading to test failures and yield loss [10]. This phenomenon has been reported by a variety of companies, in particular when at-speed transition delay testing is performed [140]. A typical example of applications sensitive to voltage drop and ground bounce is Gigabit switches containing millions of logic gates.

Methods based on scan chain manipulation [141] are very effective in reducing shift power but generally do not help reduce capture power. In particular, the scan chain segmentation method is widely used in industry because it is easy to implement and highly effective in reducing shift power.

Several research groups have also proposed reducing test power by modifying the circuit under test. This includes clock gating [142], inserting circuitry between the scan chains and the combinational portion of the CUT to block transitions [143][144][145], scan enable disabling [146], and circuit virtual partitioning [147]. Circuit modification methods can reduce both shift power and capture power, though often at a higher design-for-testability (DFT) cost.

In contrast to the above DFT-based methods, reducing test power through effective test scheduling and/or test cube manipulation does not incur any DFT overhead.

Power-constrained test scheduling is often conducted in core-based testing, in which the embedded cores to be tested concurrently are carefully selected according to a given power budget [148]. Often only a few bits in a test pattern are needed to detect all the faults covered by it, while the remaining bits are "don't-care bits" (also known as X-bits). Many methods reduce the switching activity of the CUT by taking advantage of this property, e.g., the low-power automatic test pattern generation (ATPG) methods in [149][150][151], the test compression approach in [152], and the various X-filling methods proposed recently in [153][154][155][156]. Test cubes may contain as much as 95%~98% X-bits [157]. These can be freely filled with either logic '0' or logic '1' without affecting the CUT's fault coverage. Low-power X-filling methods use this property to achieve shift power and/or capture power reduction. As a purely software-based solution, X-filling does not introduce any DFT overhead and is therefore easily integrated into any test flow. It should also be noted that, even if the given test cube is fully specified, the don't-care bits can still be identified with methods such as the one proposed in [158]. Filling X-bits to reduce scan shift power aims to create fewer differences between adjacent scan cells.
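A minimal sketch of one shift-power-oriented filling rule, commonly called adjacent fill, is given below: every X-bit takes the value of the nearest specified bit to its left, and leading X-bits take the value of the first specified bit. The encoding of a test cube as a string over '0', '1' and 'X', the function names and the sample cube are assumptions made for illustration.

```python
def adjacent_fill(cube: str) -> str:
    """Fill each 'X' with the nearest specified bit to its left.

    Leading X-bits copy the first specified bit; an all-X cube becomes all '0'.
    """
    first = next((b for b in cube if b != "X"), "0")
    filled, last = [], first
    for bit in cube:
        if bit != "X":
            last = bit
        filled.append(last)
    return "".join(filled)


def adjacent_transitions(vector: str) -> int:
    """Number of neighbouring scan-cell pairs that hold different values."""
    return sum(a != b for a, b in zip(vector, vector[1:]))


cube = "X1XX0XX1X"  # hypothetical test cube with six X-bits
filled = adjacent_fill(cube)
print(filled)                        # 111100011
print(adjacent_transitions(filled))  # 2
```

The specified bits of the cube are left untouched, so the faults targeted by the cube are still detected; only the number of value changes travelling along the scan chain is reduced.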

Observation: It is shown in [153] that logic value differences occurring at different positions have different impacts on shift power dissipation. Filling X-bits to reduce capture power is very different from the above. The aim is to minimize the Hamming distance between the input and output of each scan-FF in capture mode (denoted as the scan capture transition count), which has been shown to be closely correlated with the circuit's switching activity. In [154], Wen et al. presented the first low-capture-power X-filling technique (denoted LCP-filling) in the literature. Their method tries to reduce the scan capture transition count as much as possible by filling X-bits one by one through implication and line justification ATPG procedures. The filling order of the X-bits significantly affects the results of their method, and one of its main limitations is that it tries to reduce transitions in a single scan cell in each filling step, without considering the effect on the other X-bits. To reduce the computational complexity of the ATPG procedures used in [154], Remersaro et al. [155] introduced a probability-based X-filling method (namely preferred fill) to reduce capture power. However, their technique cannot weigh X-bits by their capture power reduction efficiency to obtain the optimal filling order among them. Because of their different objectives, low-shift-power X-filling methods may result in higher capture power dissipation, and vice versa. Consequently, it is essential to consider both shift power reduction and capture power reduction during the X-filling process. The method in [156] takes a fully specified test set as input and generates a new test set with reduced shift power and capture power. The authors first identify X-bits in the test set and then fill 50% of the X-bits using preferred fill [155], while the remaining X-bits are then filled using adjacent fill [153].
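The scan capture transition count mentioned above can be written down directly. The sketch below assumes that the scan-FF contents before capture (the shifted-in stimulus) and after capture (the captured response) are available as equal-length bit strings, for example from logic simulation. The function name and the sample values are hypothetical.

```python
def capture_transition_count(stimulus: str, response: str) -> int:
    """Hamming distance between scan-FF contents before and after capture."""
    if len(stimulus) != len(response):
        raise ValueError("stimulus and response must have the same length")
    return sum(s != r for s, r in zip(stimulus, response))


# Two hypothetical fillings of the same test cube with their simulated responses.
candidates = {
    "fill_a": ("110010", "010011"),
    "fill_b": ("110110", "110100"),
}
counts = {
    name: capture_transition_count(stim, resp)
    for name, (stim, resp) in candidates.items()
}
best = min(counts, key=counts.get)
print(counts)  # {'fill_a': 2, 'fill_b': 1}
print(best)    # fill_b
```

A capture-power-aware X-filling method would prefer the filling with the smaller count, provided the count stays below whatever peak power limit applies to the design.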

Although both shift power and capture power can be reduced with the X-filling procedure in [156], filling half of the X-bits for capture power reduction and the other half for shift power reduction is not a very good approach. This is because shift power dissipation and capture power dissipation should be dealt with differently. The main objective in shift power reduction is to lower the average test power dissipation as much as possible, so that a higher shift frequency and/or greater test parallelism can be used to reduce the CUT's test time and hence the test cost. The main objective in capture power reduction, by contrast, is to keep it under a safe peak power limit; it is unnecessary to reduce it to the smallest possible value.

Test during burn-in at the wafer level enhances the benefits derived from the burn-in process. Monitoring device responses while applying suitable test stimuli during wafer-level burn-in (WLBI) makes faulty devices easier to identify. This process can be referred to as "WLTBI"; it is also referred to in the literature as "test in burn-in" (TIBI) [159], "wafer-level burn-in test" (WLBT) [162], etc. WLTBI technology has recently made rapid advances with the advent of the "known good die" (KGD) [163], i.e., devices that are sold as tested bare dies. KGDs are building blocks of complex system-in-package (SiP) designs, in which chips with various functionalities are combined in a single package. The growing demand for KGDs in complex system-on-a-chip (SoC)/SiP architectures, multichip modules, and stacked memories shows the importance of cost-effective and viable WLTBI solutions [160]. WLTBI will also facilitate advances in the manufacture of 3-D ICs, where bare dies or wafers must be tested before they are vertically stacked. WLTBI can therefore be seen as an enabling technology for cost-efficient manufacture of reliable 3-D ICs. The basic methods used for the testing and burn-in of individual chips are the same as those used in WLTBI. Test and burn-in require appropriate electrical excitation of the device/die under test (DUT), irrespective of whether it is done on a packaged chip or a bare die. The only difference lies in how the electrical excitation is delivered. In conventional testing and burn-in, electrical bias and excitation are provided by mechanically contacting the leads. In the case of WLTBI, this excitation can be provided in any of the following three ways: the probe-per-pad method, the sacrificial metal method, and the built-in test/burn-in method [164].

The built-in test/burn-in technique involves the use of on-chip design-for-test (DFT) infrastructure to achieve WLTBI. This method allows wafers to undergo full-wafer contact using far fewer probe contacts. The presence of sophisticated built-in DFT features on modern ICs makes "monitored burn-in" possible. Monitored burn-in is a process in which a DUT is provided with input test patterns and its output responses are monitored online, thereby leading to the identification of failing devices. WLTBI therefore has significant potential to lower the overall product cost by breaking down the barrier between burn-in and test, while also providing test monitoring capabilities [160], [162], [165].

There are many practical challenges associated with WLTBI; these include full-contact burn-in and efficient thermal control [164]. A successful WLTBI process also requires a thorough understanding of the thermal characteristics of the DUT. To keep the burn-in time to a minimum, it is necessary to test the devices at the upper end of their temperature envelope [161]. Furthermore, the junction temperature of the DUT needs to be maintained within a small window so that burn-in predictions are accurate.

Power management for WLTBI is very important for scan testing. Scan-based testing is now widely used in the semiconductor industry [166]. However, scan testing leads to complex power profiles during test application; in particular, there is significant variation in the power consumption of a device under test on a cycle-by-cycle basis. In a burn-in environment, the high variability in scan power adversely affects predictions of burn-in time, resulting in a device being subjected to excessive or insufficient burn-in [167]. Incorrect predictions may also result in thermal runaway. Dynamic burn-in using full-scan circuit automatic test pattern generation (ATPG) was proposed in [168] with the aim of maximizing the number of transitions in the scan chains.

Observation: The high power consumption of ICs during scan-based testing is a serious concern in the semiconductor industry; scan power is often several times higher than the device power dissipation in normal circuit operation [169]. Excessive power consumption during scan testing can lead to yield loss. As a result, power reduction during test pattern application has recently received a lot of attention [170], [171], [172], [173], [174], [175]. Research has focused on pattern ordering to reduce test power [170], [176], [177]. The pattern-ordering problem has been mapped to the well-known traveling salesman problem (TSP) [176], [177]. Wafer-level burn-in requires low variation in power consumption during the testing of semiconductor devices [161]. Test-pattern reordering methods that reduce dynamic power consumption do not address this need of WLTBI. Dedicated methods need to be developed to address this aspect of low-power testing, i.e., ordering test patterns to reduce the overall variation in power consumption.

12. Conclusion

As devices grow in gate count, scan test data volume and test application time grow as well, even for single stuck-at faults with single detection. This poses a serious problem for manufacturing test because, as test data volume increases, more tester memory is needed to hold the whole test set and it takes longer to deliver the test set through limited test channels, both of which lead to higher test cost. In addition, considerable research on low-power design and testability of VLSI circuits has shown that the power consumed in test mode is often much higher than the power consumed in normal mode, owing to the high switching activity in the nodes of the circuit under test, which may result in increased dynamic power dissipation or higher supply current demand. This can reduce the reliability of the circuit under test because of high temperature and current density, which cannot be tolerated by circuits designed using power reduction methods. Excessive power dissipation may cause hot spots that could damage the CUT. High supply current may cause excessive power supply droop, leading to larger gate delays that cause good chips to fail tests, resulting in yield loss.

Many studies from academia and industry have shown the need to reduce power consumption during the test of digital and memory designs. This need arises because test power can generally be more than twice the power consumed in normal functional mode. Since test throughput and manufacturing yield are often affected by test power, various test solutions have been proposed over the past decade. In this paper, we have discussed many low-power test solutions that tackle the above-mentioned problems. Both structural and algorithmic solutions are described, along with their impact on parameters such as fault coverage, test time, area overhead, circuit performance penalty, and design flow modification. These solutions cover a wide spectrum of testing environments, including scan testing, scan-based BIST, test-per-clock BIST, test compression, and memory testing.

External testing with ATE becomes very expensive as the complexity of modern chips increases. The BIST design technique has been widely adopted in VLSI design to enable a chip to test itself and evaluate its own response at an acceptable cost. However, the main problem with logic BIST methods is that power consumption during BIST can exceed the power rating of the chip or package. High average power can cause overheating of the chip, and high peak power can cause noise-related failures. It is expected that low-power test compression methods and functional test techniques will need to be developed so that they work effectively with logic BIST.