1. I. Introduction

The proliferation of digitization across private-sector, public-sector and governmental organizations has created an impetus to increase both the storage capacity of devices and the speed of accessing and retrieving data through the Internet. Owing to growing awareness and usage of the Internet and the World Wide Web (WWW), the number of Internet users has increased exponentially. Although a number of approaches have been developed with Internet users, and their difficulties in accessing data on the Internet and the WWW, in mind, they do not completely fulfil the users' requirements. Images in particular demand a large amount of storage space and take more time to retrieve from an image repository.

At an earlier stage, text-annotation-based image retrieval was developed. It is time consuming (Yap and Wu, 2015), and it is neither effective nor efficient, because it retrieves images based on text annotated manually by the user. Content-based image retrieval (CBIR) was developed next and attracted a number of researchers. Most CBIR techniques are based on the contents of the images, namely low-level global visual features such as colour properties, shape, texture and spatial orientation, which are used as a query for the retrieval process (Huang et al., 1997; Stricker and Orengo, 1995; Pentland et al., 1994; Fuh et al., 2000). Liu et al. (2011) propose the micro-structure descriptor, which extracts and integrates colour, texture, shape and colour layout information for image retrieval. The authors state that it has only 72 dimensions for a full colour image, but this is still large compared with the methods proposed by Seetharaman (2015) and Krishnamoorthi and Sathiya Devi (2013). The work proposed by Murala et al. (2012) encodes the relationship between a reference pixel and its surrounding neighbours by comparing gray-level values, and it extracts edge information from local extrema at different angles. Although a number of works have been developed based on statistical distributional approaches and parametric tests of hypothesis, most of them are neither effective nor efficient, since there is no guarantee that all images follow an independent and identically distributed Gaussian random process. Statistical parametric tests can be applied to an image only if it follows a Gaussian process; otherwise, they do not lead to a good result. In general, parametric tests can be used if the data are quantitative and are independent and identically distributed according to a Gaussian process. This motivated the development of the proposed method.

Author affiliations: Division of Computer and Information Sciences, Faculty of Science, Annamalai University, Annamalainagar -608 002, Tamil Nadu, INDIA; B.Tech (ECE), Pondicherry Engineering College, Pondicherry-605014.

This paper adopts canonical correlation analysis (CCA) for image retrieval. It is effective and efficient for both textured and structured images, since it does not strictly rely on a distributional assumption. Ordinary correlation analysis depends on the co-ordinate system in which the variables are described, so even if there is a very strong linear relationship between two sets of multidimensional variables, this relationship might not be visible as a correlation. CCA seeks a pair of linear transformations, one for each set of variables, such that when the sets of variables are transformed, the corresponding co-ordinates are maximally correlated. It is one of the valuable multi-data processing methods (Sun et al., 1994; Zhang et al., 1999). In recent years, CCA has been applied in several fields such as signal processing, computer vision, neural networks and speech recognition.

In recent years, there has been a vast increase in the amount of multimedia content available both off-line and online, but this data cannot be accessed or used unless it is organized in such a way as to allow efficient browsing. To enable content-based image retrieval with no reference to labelling, this paper attempts to learn the semantic representation of the images and their associated text. This paper presents a general approach using kernel CCA (KCCA) that can be used for content-based retrieval (David and John, 2003) as well as region-based retrieval (Fyfe and Lai, 2001; David and John, 2003). In both cases, the KCCA approach is compared with the Generalized Vector Space Model (GVSM), which aims at capturing some term-to-term correlations by looking at co-occurrence information.

Canonical correlation is the most general multivariate form, because multiple regression, discriminant function analysis and MANOVA are special cases of canonical correlation analysis. It is therefore expected to yield better results. A further advantage of canonical correlation is that, for a descriptive analysis, the data are not required to satisfy normality conditions.

2. II. Proposed Retrieval Method

and the linear combination of the feature vectors $y_i$ of the target image is

$$I_t = f_1 y_1 + f_2 y_2 + \cdots + f_n y_n \qquad (2)$$

The first canonical correlation is the maximum among the correlation coefficients between the variates $I_q$ and $I_t$; it is taken as the canonical correlation coefficient between the query and target images.

The function to be maximized is

$$\rho = \max_{I_q, I_t} \operatorname{corr}(S_x I_q, S_y I_t) = \max_{I_q, I_t} \frac{\langle S_x I_q, S_y I_t \rangle}{\lVert S_x I_q \rVert \, \lVert S_y I_t \rVert}$$

If $\hat{E}[f(x, y)]$ denotes the empirical expectation of the function $f(x, y)$, then

$$\hat{E}[f(x, y)] = \frac{1}{N} \sum_{i=1}^{N} f(x_i, y_i)$$

Now, the correlation expression can be rewritten as in equation (3):

$$\rho = \max_{I_q, I_t} \frac{\hat{E}\left[\langle I_q, x \rangle \langle I_t, y \rangle\right]}{\sqrt{\hat{E}\left[\langle I_q, x \rangle^2\right] \hat{E}\left[\langle I_t, y \rangle^2\right]}} \qquad (3)$$

$$\rho = \max_{I_q, I_t} \frac{\hat{E}\left[I_q' x y' I_t\right]}{\sqrt{\hat{E}\left[I_q' x x' I_q\right] \hat{E}\left[I_t' y y' I_t\right]}} \qquad (4)$$

It follows that

$$\rho = \max_{I_q, I_t} \frac{I_q' \hat{E}[x y'] I_t}{\sqrt{I_q' \hat{E}[x x'] I_q \; I_t' \hat{E}[y y'] I_t}} \qquad (5)$$

$$C(x, y) = \hat{E}\left[\begin{pmatrix} x \\ y \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix}'\right] = \begin{bmatrix} C_{xx} & C_{xy} \\ C_{yx} & C_{yy} \end{bmatrix} = C \qquad (6)$$

The total covariance matrix $C$ is a block matrix, where the within-sets covariance matrices are $C_{xx}$ and $C_{yy}$, and the between-sets covariance matrices are $C_{xy} = C_{yx}'$. Thus, we can rewrite the function $\rho$ as

$$\rho = \max_{I_q, I_t} \frac{I_q' C_{xy} I_t}{\sqrt{I_q' C_{xx} I_q \; I_t' C_{yy} I_t}} \qquad (7)$$

The maximum canonical correlation is the maximum of $\rho$ with respect to $I_q$ and $I_t$.

Statistical hypothesis:

$$H_0: \rho(I_q, I_t) = 0 \qquad H_a: \rho(I_q, I_t) \neq 0$$

The test statistic is

$$\chi^2 = -\left[p - 1 - \tfrac{1}{2}(m + n + 1)\right] \ln \prod_{j=1}^{\min(m, n)} \left(1 - \rho_j^2\right) \qquad (8)$$

which is asymptotically distributed as chi-squared.
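The maximization in equation (7) can be illustrated numerically. The following is a minimal sketch, not the authors' implementation: it assumes NumPy, centres the data before forming the covariance blocks, and adds a small ridge term `reg` for numerical stability. The function name, the ridge term and the synthetic data are all hypothetical choices made for illustration.

```python
import numpy as np

def first_canonical_correlation(X, Y, reg=1e-8):
    """Return (rho, w_x, w_y) maximizing w_x' Cxy w_y / sqrt(w_x' Cxx w_x * w_y' Cyy w_y).

    X is an N x m array and Y an N x n array of paired observations.
    `reg` is an assumed ridge term that keeps Cxx and Cyy invertible.
    """
    X = X - X.mean(axis=0)          # centre each feature (assumption)
    Y = Y - Y.mean(axis=0)
    N = X.shape[0]
    Cxx = X.T @ X / N + reg * np.eye(X.shape[1])   # within-set covariance
    Cyy = Y.T @ Y / N + reg * np.eye(Y.shape[1])
    Cxy = X.T @ Y / N                               # between-set covariance

    # Whiten both sets: with Cxx = Lx Lx' and Cyy = Ly Ly',
    # the singular values of K = Lx^{-1} Cxy Ly^{-T} are the canonical correlations.
    Lx = np.linalg.cholesky(Cxx)
    Ly = np.linalg.cholesky(Cyy)
    K = np.linalg.solve(Lx, Cxy) @ np.linalg.inv(Ly).T
    U, s, Vt = np.linalg.svd(K)

    # Map the leading singular vectors back to the original co-ordinates.
    w_x = np.linalg.solve(Lx.T, U[:, 0])
    w_y = np.linalg.solve(Ly.T, Vt[0, :])
    return float(s[0]), w_x, w_y

# Synthetic example: the first column of Y is an exact linear function of X[:, 0],
# so the first canonical correlation should be very close to 1.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
Y = np.column_stack([2.0 * X[:, 0] + 1.0, rng.normal(size=200)])
rho, w_x, w_y = first_canonical_correlation(X, Y)
print(round(rho, 4))
```

Whitening followed by a singular value decomposition is one common way to solve this kind of problem; a generalized eigenvalue formulation would serve equally well.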

3. IV. Measure of Performance

In order to measure the performance of the proposed method, the $F_\beta$-measure is adopted, which is a weighted harmonic mean of the precision (P) and recall (R) values; these are given in equations (9), (10) and (11). $F_\beta$ measures the effectiveness of retrieval with respect to a user who attaches $\beta$ times as much importance to recall as to precision (Van Rijsbergen, 1979).

$$F_\beta\text{-measure} = \frac{(1 + \beta^2)(P \times R)}{\beta^2 \times P + R} \qquad (9)$$

where P and R take their standard set-based definitions:

$$P = \frac{\lvert \{\text{relevant images}\} \cap \{\text{retrieved images}\} \rvert}{\lvert \{\text{retrieved images}\} \rvert} \qquad (10)$$

$$R = \frac{\lvert \{\text{relevant images}\} \cap \{\text{retrieved images}\} \rvert}{\lvert \{\text{relevant images}\} \rvert} \qquad (11)$$

The value of $\beta$ is decided according to the relative weight given to P and R. In the context of the proposed research, $\beta$ is taken as 0.5, since more weight is given to precision. Thus, equation (9) can be written as in equation (12):

$$F_{0.5} = \frac{1.25\,(P \times R)}{0.25 \times P + R} \qquad (12)$$
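As a quick check of the $F_\beta$ formula in equation (9), the sketch below evaluates it with $\beta = 0.5$ for the CalTech precision and recall reported for the proposed system in Table 2. The function name `f_beta` is a hypothetical helper introduced only for this illustration.

```python
def f_beta(precision, recall, beta=0.5):
    """F_beta as in equation (9): (1 + beta^2) * P * R / (beta^2 * P + R)."""
    if precision < 0 or recall < 0:
        raise ValueError("precision and recall must be non-negative")
    denominator = beta ** 2 * precision + recall
    if denominator == 0:
        return 0.0  # convention for the degenerate case P = R = 0 (assumption)
    return (1 + beta ** 2) * precision * recall / denominator

# CalTech row of Table 2, proposed system: R = 0.9585, P = 0.7952
caltech_f = f_beta(precision=0.7952, recall=0.9585)
print(f"{caltech_f:.4f}")  # agrees with the tabulated F0.5 of 0.8233 to four decimals
```

With $\beta = 1$ the same function reduces to the ordinary harmonic mean of precision and recall.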

5. V. Experiments and Results

A colour image is given to the system as the input key (query) image, and the shapes in the image are segmented; the segmented image is then converted to the HSV colour space. The texture features are extracted from the V component of the HSV colour space. The colour features (H, S and V) and the texture features are treated as observations and used in the CCA method expressed in equation (7). Owing to space constraints, only a sample shape-segmented colour image is presented in Fig. 1. To evaluate the CCA method discussed in section 2, the expression in equation (7), which measures the correlation between the query and target images, is implemented on the image and feature databases constructed and discussed in section 4. Owing to space constraints, only some of the images from the image database used in the experiments are presented in this paper.
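The colour-space step described above can be sketched with the Python standard library. This is only an illustration of converting RGB pixels to HSV and isolating the V component; it does not reproduce the authors' segmentation or texture features, and the helper names and sample pixels are hypothetical.

```python
import colorsys

def rgb_pixels_to_hsv(pixels):
    """Convert 8-bit (R, G, B) tuples to (H, S, V) tuples with components in [0, 1]."""
    return [colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0) for r, g, b in pixels]

def value_channel(hsv_pixels):
    """Extract the V component, from which texture features would be computed."""
    return [v for _, _, v in hsv_pixels]

# Three illustrative pixels: pure red, mid green and dark grey.
pixels = [(255, 0, 0), (0, 128, 0), (30, 30, 30)]
hsv = rgb_pixels_to_hsv(pixels)
v_values = value_channel(hsv)
print(hsv[0])  # pure red maps to (0.0, 1.0, 1.0)
```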

The target images are identified and marked in the image database, based on the results obtained from the experiments. The user can fix the level of significance of the tests according to requirements, and thereby retrieve a number of same or similar images. The proposed system matches and retrieves the same image (target and query images are the same) from the image feature database at a level of significance of 0% or 0.001 (100% accuracy, same images); almost the same images at a level of significance of 1% to 2% (99% to 98% accuracy); similar images at a level of significance of 3% to 5%; and related images at a level of significance of 6% to 8%. The selected images are indexed (ranked) from lowest to highest, i.e. in ascending order of the test statistic values obtained. The image that corresponds to the first value in the indexed list is marked as the target image and is retrieved from the image database. In order to validate the proposed system, the image in column 1 of Figure 1(a) is given as the input query image, for which the system retrieves the images in the corresponding row. The retrieved output images show that the proposed CCA method is robust to scaling and rotation for both textured and structured images. Since the proposed system behaves in the same way as the distributional approach, it is invariant to rotation and noise.

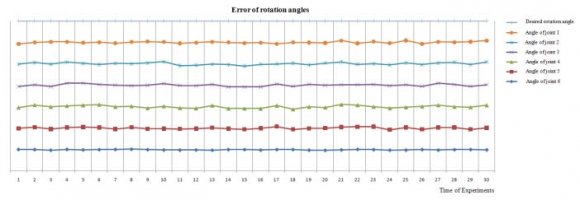

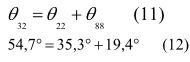

The experiment is conducted at various levels of significance on the input query Wall Street Bull image given in Figure 2(a). The images in column 2 of Figure 2(b) are retrieved at the 2% level of significance; the images in columns 3, 2 and 1 are retrieved at the 5% level of significance; at the 8% and 12% levels of significance, the system retrieves the images in columns 4, 3, 2, 1 and 7, respectively; at the 15% level of significance, all the images presented in Figure 2(b) are retrieved. The numbers of shapes in the query and target images are compared; if they match, this reinforces that the two images are the same or similar.

The output of the experiments, based on the canonical correlation, is tabulated in Table 1. It can be observed that the proposed CCA yields 0.9982 for the images Wall Street Bull-1 versus Wall Street Bull-1, i.e. when the query and target images are the same. Similarly, the canonical correlation coefficients obtained for the different combinations of the Bull images are presented in Table 1. The computed canonical correlation coefficient matrix is symmetric.

The main advantage of the proposed system is that it allows the user to retrieve only the required number of images by fixing the level of significance, whereas the existing methods retrieve a number of similar images. Thus, with the existing methods, the user has to spend time selecting the required image from the set of retrieved same or similar images. In addition, the proposed system retrieves a set of similar images when the level of significance is fixed at a desired level.

Furthermore, to demonstrate the efficacy of the proposed method, a number of structured images are considered for the experiment. A few of them are presented in Figure 3. The image given in Figure 3(a) is fed as the input query to the system, and the level of significance is fixed at 0.08 (i.e. 8 percent), for which the system retrieves the images presented in row 1. The images in row 2 are retrieved when the level of significance is fixed at 0.12 or less (i.e. 12 percent or less), whereas the system retrieves the images in rows 3 and 4 at the level of significance 0.15. The obtained results indicate that the proposed method outperforms the existing systems. However, this paper takes only textured and structured images into account. Averages of the precision and recall values are calculated for the various methods, including the OP, BD and MD measures, and the results are presented in Table 2. A bar chart of the observed results is presented in Figure 5.

6. Time Complexity

The performance of the proposed system is compared with that of the existing systems (the OP method and the BD and MD distance measures) in terms of computational time. The system is implemented in Java SE 7 on a PC with an Intel Core i5-4440 processor and 4 GB of DDR3 RAM. The time consumed by the proposed system for feature extraction, matching and retrieving the images is measured in seconds after rigorous experimentation, and the results are presented in Table 3. The proposed system requires less time than the existing systems and also yields better retrieval results.

7. VI. Conclusion

In this paper, a novel technique based on canonical correlation analysis and the Chi-square test is employed. The Chi-square test determines whether the correlation coefficient between the query and target images is significant. The canonical correlation coefficient captures the spatial relationship among the pixels in the image, through which the spatial orientation of the texture properties is extracted, and the properties of the query and target images are tested against each other. If the test is significant, it is concluded that the input query and target images are the same or similar; otherwise, it is inferred that the two images differ significantly. The results show that the proposed technique yields better results than the existing techniques.

Volume XV, Issue VI, Version I

For a large sample size $N$, the test statistic in equation (8) has $(m - i + 1)(n - i + 1)$ degrees of freedom. If the value of the test statistic for $(I_q, I_t)$ is less than the critical value $\chi^2_\alpha$, then the two images belong to the same class; otherwise, the two images are different, where $\alpha$ is the level of significance (Kanti et al., 1979).
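The test in equation (8) and the decision rule above can be sketched as follows. This is an illustration under stated assumptions rather than the authors' code: the symbol `p` is taken to be the sample size, the critical value is supplied by the caller from a chi-squared table, and the function names and example correlations are hypothetical.

```python
import math

def bartlett_statistic(rhos, p, m, n):
    """Equation (8): -[p - 1 - (m + n + 1) / 2] * ln(prod_j (1 - rho_j^2)).

    `rhos` holds the canonical correlations; only the first min(m, n) are used.
    Every rho_j must satisfy |rho_j| < 1, otherwise the logarithm is undefined.
    """
    k = min(m, n)
    log_product = sum(math.log(1.0 - r * r) for r in rhos[:k])
    return -(p - 1 - 0.5 * (m + n + 1)) * log_product

def degrees_of_freedom(m, n, i=1):
    """Degrees of freedom (m - i + 1)(n - i + 1) stated in the text."""
    return (m - i + 1) * (n - i + 1)

def same_class(statistic, critical_value):
    """Decision rule as stated in the text: same class if the statistic is below the critical value."""
    return statistic < critical_value

# Hypothetical example: two canonical correlations, m = n = 2, p = 100 samples.
stat = bartlett_statistic([0.5, 0.2], p=100, m=2, n=2)
df = degrees_of_freedom(2, 2)
critical = 9.488  # tabulated chi-squared critical value for alpha = 0.05, df = 4
print(round(stat, 2), df, same_class(stat, critical))
```

Passing the critical value in explicitly keeps the sketch free of third-party dependencies; a statistics library could compute it from `alpha` and `df` instead.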

Therefore, in total there are [1932 (actual images) + ((16 × (695 + 682)) + (4676 + 3336 + 558 + 537)) × 3 (rotated through 90°, 180° and 270°) + 30,289 (scaled) + 626 (satellite)] = 1,28,482 images. Based on these image collections, an image database and a feature vector (FV) database are constructed.

Table 1: Canonical correlation coefficients between the Wall Street Bull images

| Vs. | Bull-1 | Bull-2 | Bull-3 | Bull-4 | Bull-5 |
| --- | --- | --- | --- | --- | --- |
| Bull-1 | 0.9999 | 0.9881 | 0.7506 | 0.7457 | 0.6152 |
| Bull-2 | 0.9881 | 0.9998 | 0.7561 | 0.7389 | 0.5896 |
| Bull-3 | 0.7506 | 0.7561 | 0.9999 | 0.7931 | 0.6856 |
| Bull-4 | 0.7457 | 0.7389 | 0.7931 | 0.9998 | 0.7953 |
| Bull-5 | 0.6152 | 0.5896 | 0.6856 | 0.7953 | 1.0000 |
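The coefficients for the Bull images can be used to illustrate how targets might be ordered for a given query. The sketch below copies the tabulated values, confirms that the matrix is symmetric, and ranks the other images by descending coefficient. Ranking by the coefficient itself is an assumption made for illustration (the paper indexes images by test statistic values), and the function names are hypothetical.

```python
NAMES = ["Bull-1", "Bull-2", "Bull-3", "Bull-4", "Bull-5"]
RHO = [
    [0.9999, 0.9881, 0.7506, 0.7457, 0.6152],
    [0.9881, 0.9998, 0.7561, 0.7389, 0.5896],
    [0.7506, 0.7561, 0.9999, 0.7931, 0.6856],
    [0.7457, 0.7389, 0.7931, 0.9998, 0.7953],
    [0.6152, 0.5896, 0.6856, 0.7953, 1.0000],
]

def is_symmetric(matrix, tol=1e-12):
    """Check that matrix[i][j] equals matrix[j][i] for every pair of indices."""
    size = len(matrix)
    return all(abs(matrix[i][j] - matrix[j][i]) <= tol
               for i in range(size) for j in range(size))

def rank_targets(query, names=NAMES, rho=RHO):
    """Return the other images ordered from most to least correlated with the query."""
    q = names.index(query)
    others = [(rho[q][j], names[j]) for j in range(len(names)) if j != q]
    return [name for _, name in sorted(others, reverse=True)]

print(is_symmetric(RHO))       # True
print(rank_targets("Bull-1"))  # ['Bull-2', 'Bull-3', 'Bull-4', 'Bull-5']
```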

Table 2: Average recall (R), precision (P) and F0.5 values for the proposed system (α at 0.12 and above) and the OP, BD and MD methods

| Image Database | Proposed R | Proposed P | Proposed F0.5 | OP R | OP P | OP F0.5 | BD R | BD P | BD F0.5 | MD R | MD P | MD F0.5 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| CalTech | 0.9585 | 0.7952 | 0.8233 | 0.9015 | 0.7631 | 0.7873 | 0.8523 | 0.6937 | 0.7205 | 0.8252 | 0.6817 | 0.7063 |
| Corel | 0.9098 | 0.8585 | 0.8683 | 0.9255 | 0.8039 | 0.8256 | 0.7829 | 0.7736 | 0.7754 | 0.8095 | 0.6912 | 0.7120 |
| Structure Images | 0.9681 | 0.7852 | 0.8160 | 0.8762 | 0.7729 | 0.7916 | 0.8139 | 0.6483 | 0.6758 | 0.8069 | 0.6275 | 0.6567 |

Table 3: Time consumption of the proposed system and the OP, BD and MD methods

| Time Consumption | Proposed System | OP | BD | MD |
| --- | --- | --- | --- | --- |
| Feature extraction time | 0.485 sec | 0.798 sec | 0.975 sec | 0.852 sec |
| Searching time | 0.0581 sec | 0.072 sec | 0.070 sec | 0.069 sec |